Why Most AI Initiatives Stall and How Better Opportunity Selection Fixes That

How mid-market leaders can move from AI pilots to operational impact.

Executive Summary

Across industries, mid-market companies are experimenting with AI, yet few achieve sustained operational results. Most initiatives stall not because the technology fails, but because opportunity selection is weak, workflow fit is poor, or value is never clearly defined. The path forward is not more experimentation, but more disciplined selection of AI opportunities that are feasible, measurable, and embedded into daily work.

The operational problem

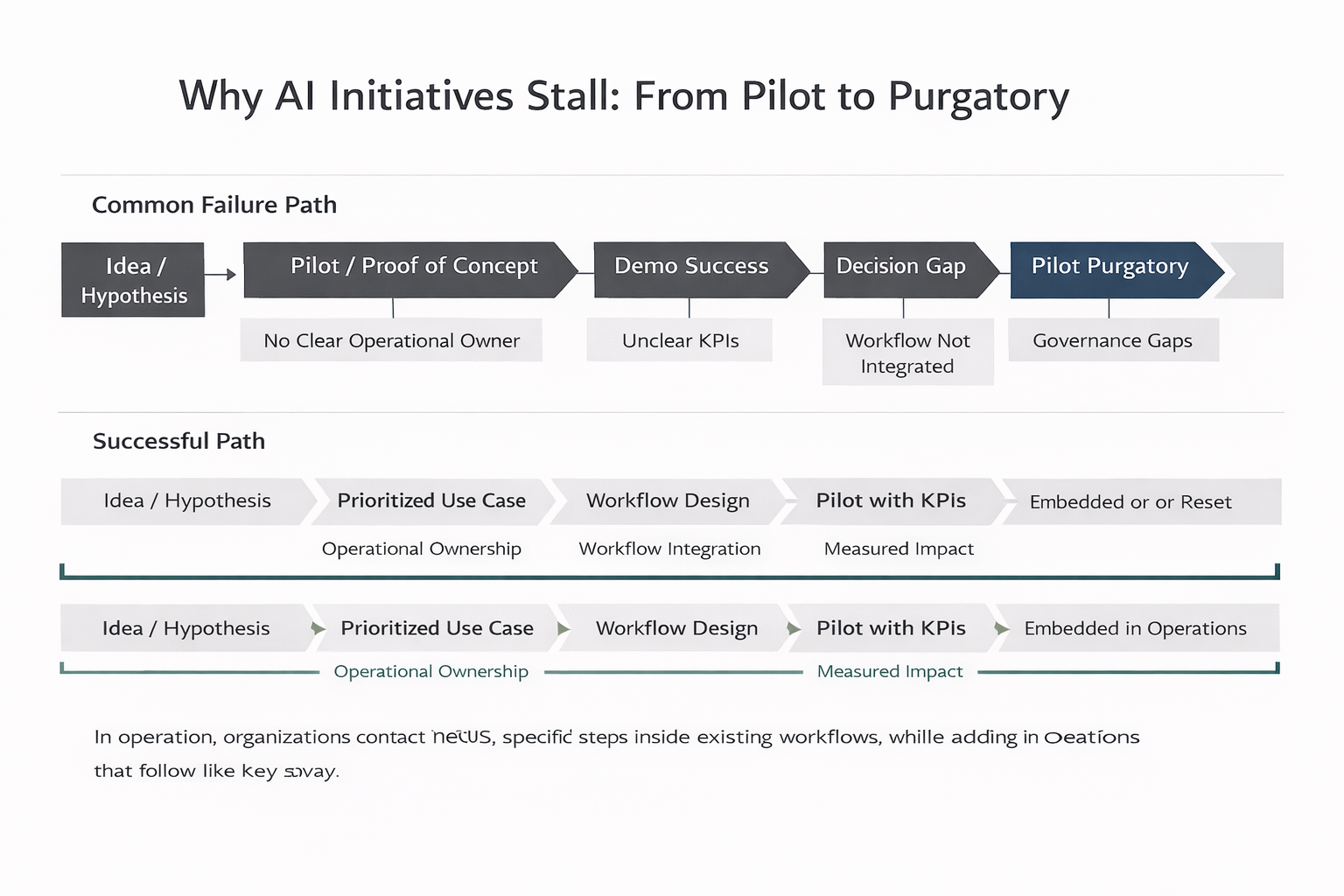

Why AI Initiatives Stall — From Pilot to Purgatory

Most mid-market organizations are not failing to build AI pilots. They are failing to turn those pilots into standard operating practice.

A familiar pattern appears across logistics firms, healthcare providers, professional services companies, and manufacturers:

- A small team launches an AI pilot, often with outside help.

- The demo looks promising. Accuracy is acceptable. Leadership is interested.

- Then progress slows. Ownership is unclear. The tool lives outside core systems.

- The business case remains vague. Six months later, momentum fades.

This is often called pilot purgatory: a solution that technically works, but never becomes part of how work actually gets done.

In operational environments, this gap is even wider. Real workflows are messy. Data is fragmented. Exceptions are common. A model that performs well in isolation often struggles once exposed to real-world variability.

The core issue is not model quality. It is the absence of a clear operational pathway from idea to embedded capability.

Why this matters

When AI initiatives stall, the impact is not neutral. It shows up directly in performance, cost, and risk.

Efficiency and throughput

Manual work stays manual. Intake queues grow. Exceptions are handled case by case. Teams spend time searching for information instead of acting on it. In service-heavy operations, this translates into longer cycle times and lower throughput.

Cost and capacity

Mid-market organizations cannot afford prolonged experimentation. Every stalled initiative consumes leadership attention, subject matter expertise, and IT capacity. With smaller budgets and teams than large enterprises, misallocated spend has an outsized impact on results.

Customer experience

Operational friction is visible to customers. Late shipment updates, slow appointment scheduling, inconsistent responses, and repeated follow-ups erode trust. AI that never reaches production does nothing to fix these issues.

Risk and compliance

Stalled initiatives often lead to fragmented tool usage without governance. Teams experiment independently, increasing risk around data handling, auditability, and consistency.

Competitive position

Organizations that operationalize AI gain compounding advantages: lower cost-to-serve, faster response times, and more consistent quality. Those stuck in pilot mode fall behind even peers of similar size.

How AI applies, explained simply and practically

Where AI Actually Helps in Operations

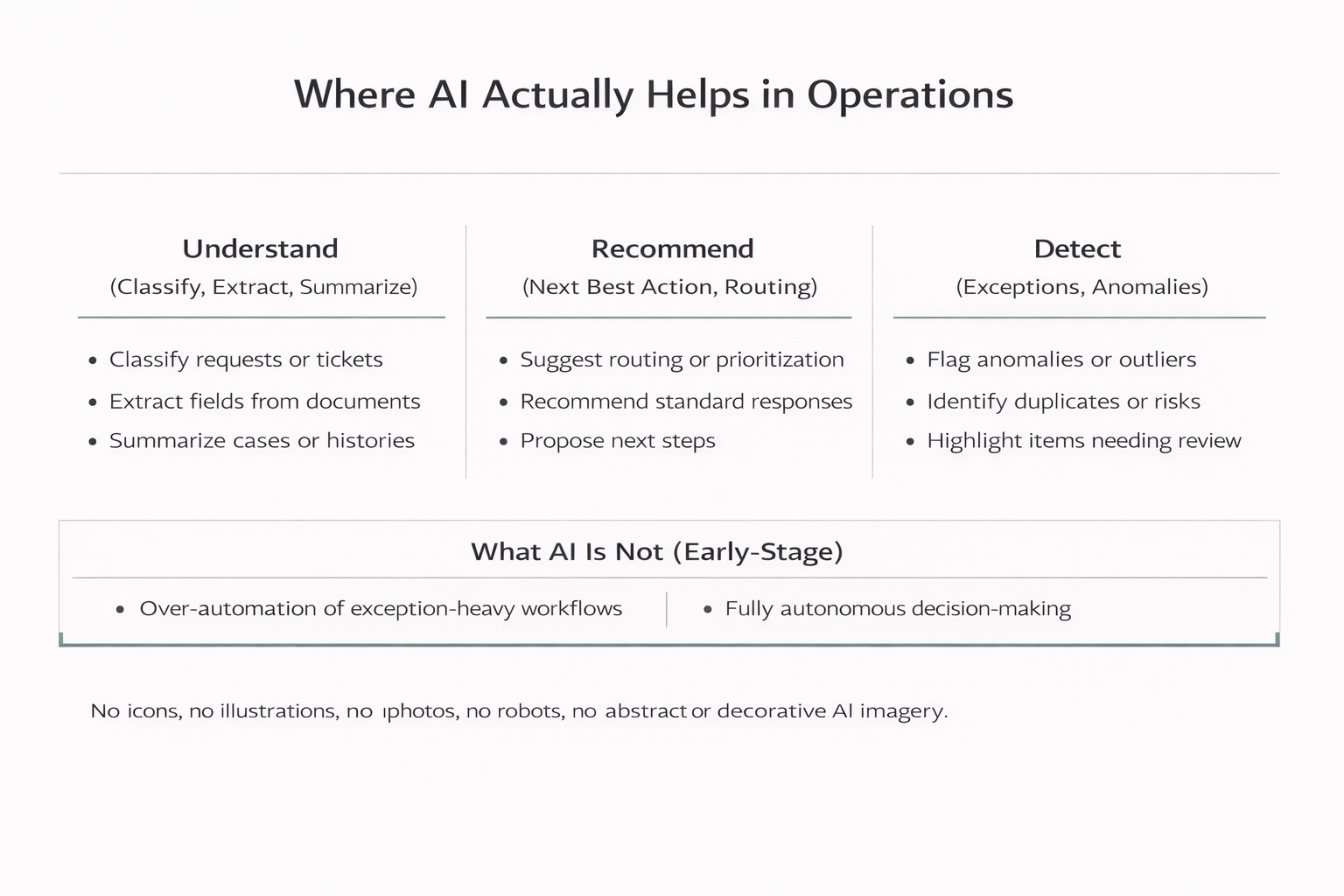

In operations, AI is most effective when it accelerates specific steps inside existing workflows. It is not about replacing departments or running the business autonomously.

Practically, AI adds value in three ways:

Understand

AI can classify, extract, and summarize information at scale: classifying inbound emails or tickets by type and urgency, extracting structured fields from documents such as invoices or forms, and summarizing case histories or customer interactions into clear handoffs.

Recommend

AI can suggest next actions within defined boundaries: recommending routing based on content and context, suggesting standard checklists or responses based on similar cases, and highlighting which items deserve escalation.

Detect

AI can flag anomalies and exceptions for human review: identifying duplicate invoices or unusual billing patterns, flagging shipments at risk based on scan behavior, and detecting records outside normal thresholds.
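As a rough illustration of the "Detect" pattern, the sketch below flags likely duplicate invoices and records outside a normal range so a person can review them. It is a simple rule-based stand-in, not a trained model, and the record fields (`id`, `vendor`, `number`, `amount`), the function names, and the sample threshold are all assumptions made for the example.

```python
from collections import defaultdict

def find_duplicate_invoices(invoices):
    """Return groups of invoice ids that share vendor, invoice number, and amount."""
    groups = defaultdict(list)
    for inv in invoices:
        # Normalize the vendor name so "Acme" and "acme " are treated as the same vendor.
        key = (inv["vendor"].strip().lower(), inv["number"].strip(), round(inv["amount"], 2))
        groups[key].append(inv["id"])
    return [ids for ids in groups.values() if len(ids) > 1]

def outside_threshold(records, field, low, high):
    """Return ids of records whose value for `field` falls outside [low, high]."""
    return [r["id"] for r in records if not (low <= r[field] <= high)]

# Hypothetical sample data for illustration only.
invoices = [
    {"id": 1, "vendor": "Acme", "number": "INV-100", "amount": 250.00},
    {"id": 2, "vendor": "Birch Supply", "number": "INV-207", "amount": 4800.00},
    {"id": 3, "vendor": "acme ", "number": "INV-100", "amount": 250.00},
]

duplicates = find_duplicate_invoices(invoices)              # candidates for human review
unusual = outside_threshold(invoices, "amount", 0, 1000)    # assumed "normal" range
```

Both functions only produce a review list. Deciding what to do with a flagged item stays with a person, which matches the assistive approach described in this section.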

What AI is not, especially early on, is a fully autonomous decision-maker. Over-automation in exception-heavy workflows almost always erodes trust. Successful programs start with assistive AI that works alongside people.

Realistic operational examples from non-tech industries

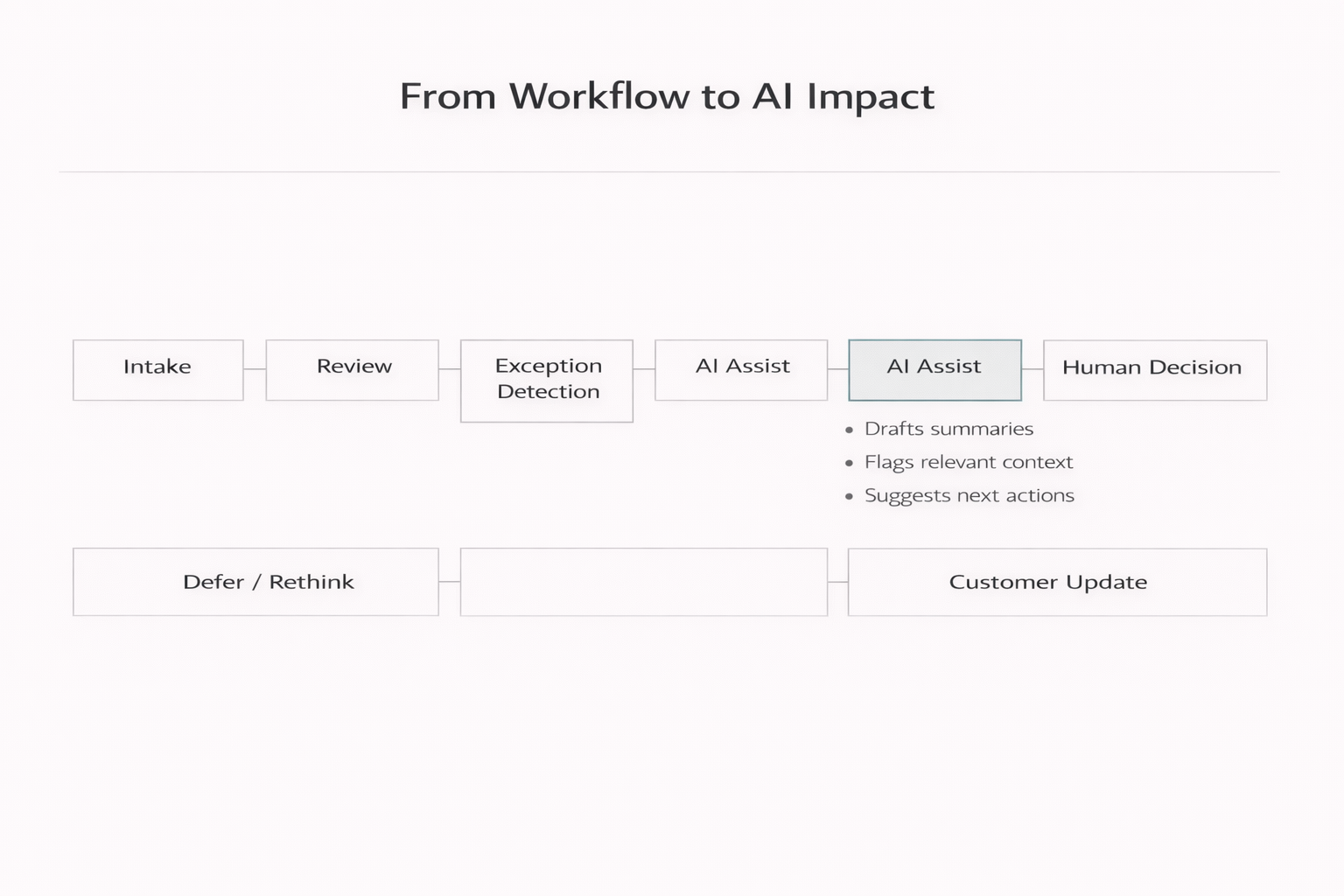

From Workflow to AI Impact

Logistics: exception handling and customer updates

A regional logistics provider struggled with shipment exceptions. Customer service teams spent hours searching across systems to understand delays and craft updates.

The AI use case was narrow and focused. The system pulled event data and related emails, summarized shipment status, drafted a customer update, and flagged cases requiring escalation. Human agents reviewed and sent the message.

The result was faster response times, fewer inbound status calls, and more consistent communication. Adoption was high because AI outputs were embedded directly into the existing CRM and transportation management system (TMS) workflows.

Healthcare administration: intake and prior authorization support

A multi-clinic healthcare organization faced administrative bottlenecks in intake and prior authorization preparation. Staff manually reviewed documents, checked for missing information, and assembled packets.

AI was used to extract required fields, identify missing documents, and generate structured checklists. All outputs were reviewed by staff before submission.

This reduced rework loops and improved cycle times without touching clinical decision-making. The administrative focus matters: by some estimates, administrative costs account for roughly 25% of total healthcare spending.

Field services: job notes and dispatch support

A field services company struggled with inconsistent technician notes and repeat visits.

AI summarized job notes, categorized issues, and suggested parts or follow-up actions for dispatch teams. The system supported decisions rather than automating them. Over time, repeat visits declined and first-time fix rates improved.

Because the AI worked inside the existing field service management system, adoption was steady and sustained.

Recommended implementation steps for companies

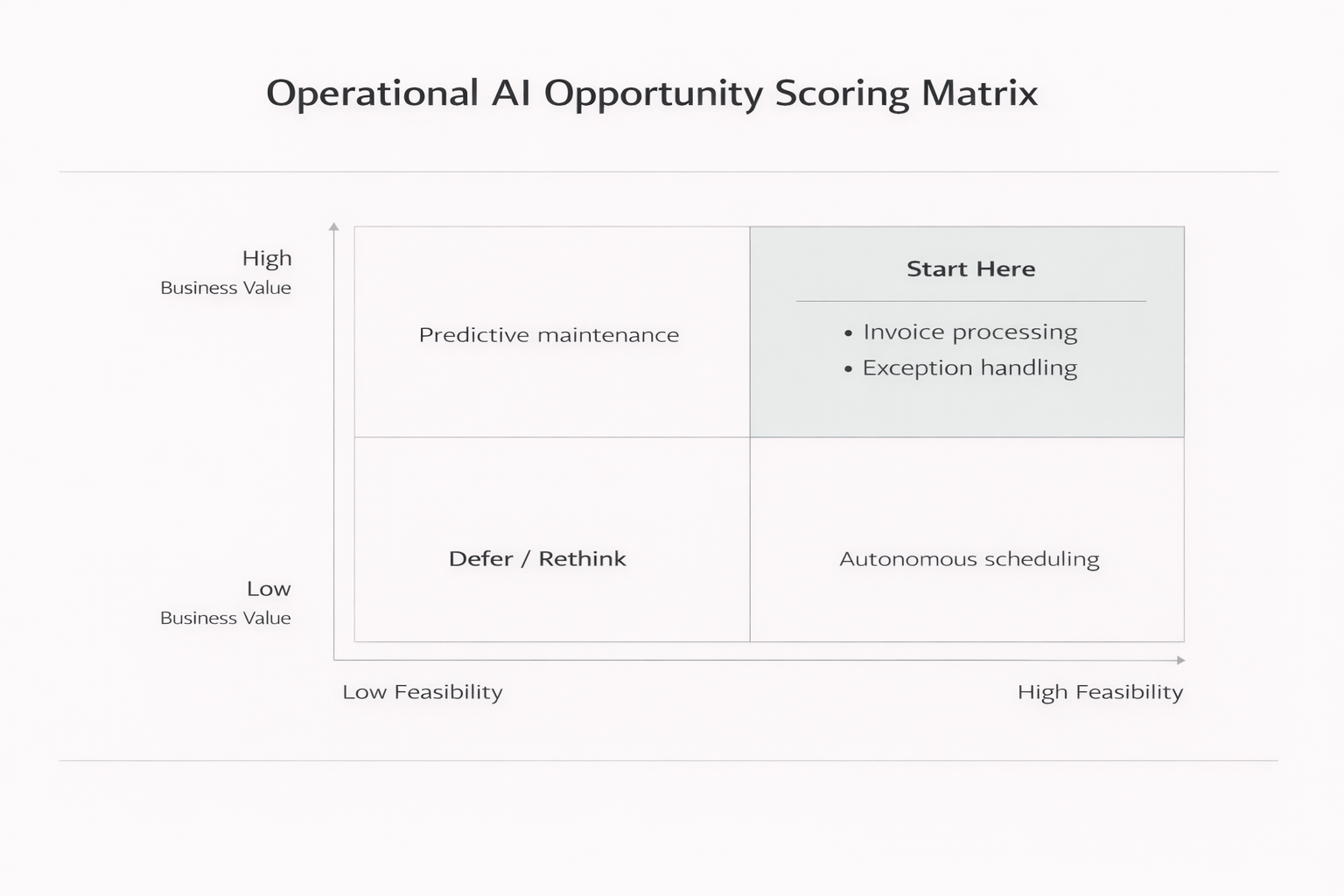

Operational AI Opportunity Scoring Matrix

Identify operational friction

Look for manual work, bottlenecks, high-volume exceptions, and rework loops. These areas usually offer better AI opportunities than novel or experimental ideas.

Score opportunities

Evaluate each use case on business value (volume, cost of delay, error impact) and feasibility (data availability, workflow clarity, integration effort). Prioritize high-value opportunities with reasonable feasibility.
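One way to make this scoring concrete is sketched below. It assumes each factor is rated on a 1 to 5 scale, averages the value factors and the feasibility factors separately, drops anything below a feasibility floor, and ranks the rest by value. The scale, the equal weighting, the floor of 3.0, and the sample ratings are illustrative assumptions, not a prescribed method.

```python
from dataclasses import dataclass

@dataclass
class Opportunity:
    name: str
    # Business value factors, each rated 1 (low) to 5 (high).
    volume: int
    cost_of_delay: int
    error_impact: int
    # Feasibility factors, each rated 1 (hard) to 5 (easy).
    data_availability: int
    workflow_clarity: int
    integration_ease: int  # inverse of integration effort

    def value(self) -> float:
        return (self.volume + self.cost_of_delay + self.error_impact) / 3

    def feasibility(self) -> float:
        return (self.data_availability + self.workflow_clarity + self.integration_ease) / 3

def prioritize(opportunities, min_feasibility=3.0):
    """Keep opportunities that clear the feasibility floor, ranked by business value."""
    feasible = [o for o in opportunities if o.feasibility() >= min_feasibility]
    return sorted(feasible, key=lambda o: o.value(), reverse=True)

# Hypothetical ratings for illustration only.
candidates = [
    Opportunity("Shipment exception updates", 5, 4, 3, 4, 4, 3),
    Opportunity("Prior authorization packets", 4, 5, 4, 3, 4, 3),
    Opportunity("Fully autonomous pricing", 5, 5, 5, 2, 1, 2),
]

ranked = prioritize(candidates)
for opp in ranked:
    print(f"{opp.name}: value={opp.value():.2f}, feasibility={opp.feasibility():.2f}")
```

In this sample, the highest-value idea is filtered out because its feasibility is too low, which is the point of scoring on both dimensions rather than on value alone.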

Define success before building

Baseline current performance. Set clear targets such as reduced handling time or improved SLA performance. Without this, ROI discussions remain abstract.
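A baseline and target can be as simple as the sketch below, which assumes handling time in minutes is the chosen metric, uses the median as the baseline, and checks whether a pilot hits an agreed percentage reduction. The function names, sample figures, and the 20% target are hypothetical.

```python
from statistics import median

def baseline_minutes(handling_times):
    """Use the median so a few unusually long cases do not distort the baseline."""
    return median(handling_times)

def reduction(baseline, current):
    """Fractional reduction relative to the baseline (0.25 means 25% faster)."""
    return (baseline - current) / baseline

def target_met(baseline, current, target_reduction):
    return reduction(baseline, current) >= target_reduction

# Hypothetical handling times in minutes, before and during the pilot.
before_pilot = [18, 22, 20, 25, 19]
during_pilot = [15, 14, 17, 16, 13]

baseline = baseline_minutes(before_pilot)
current = baseline_minutes(during_pilot)
met = target_met(baseline, current, target_reduction=0.20)
```

Agreeing on the metric, the baseline window, and the target before the build starts gives the later ROI discussion something specific to measure against.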

Design for workflow fit

Map where AI fits, where humans review, and how exceptions are handled. Embed AI into existing systems whenever possible.

Pilot narrowly and measure weekly

Start with one team or workflow slice. Track usage, outcomes, and feedback. Iterate quickly.

Productionize deliberately

Add monitoring, access controls, audit logs, and clear ownership. Treat AI as part of the operational system, not a side project.

Common pitfalls and how to avoid them

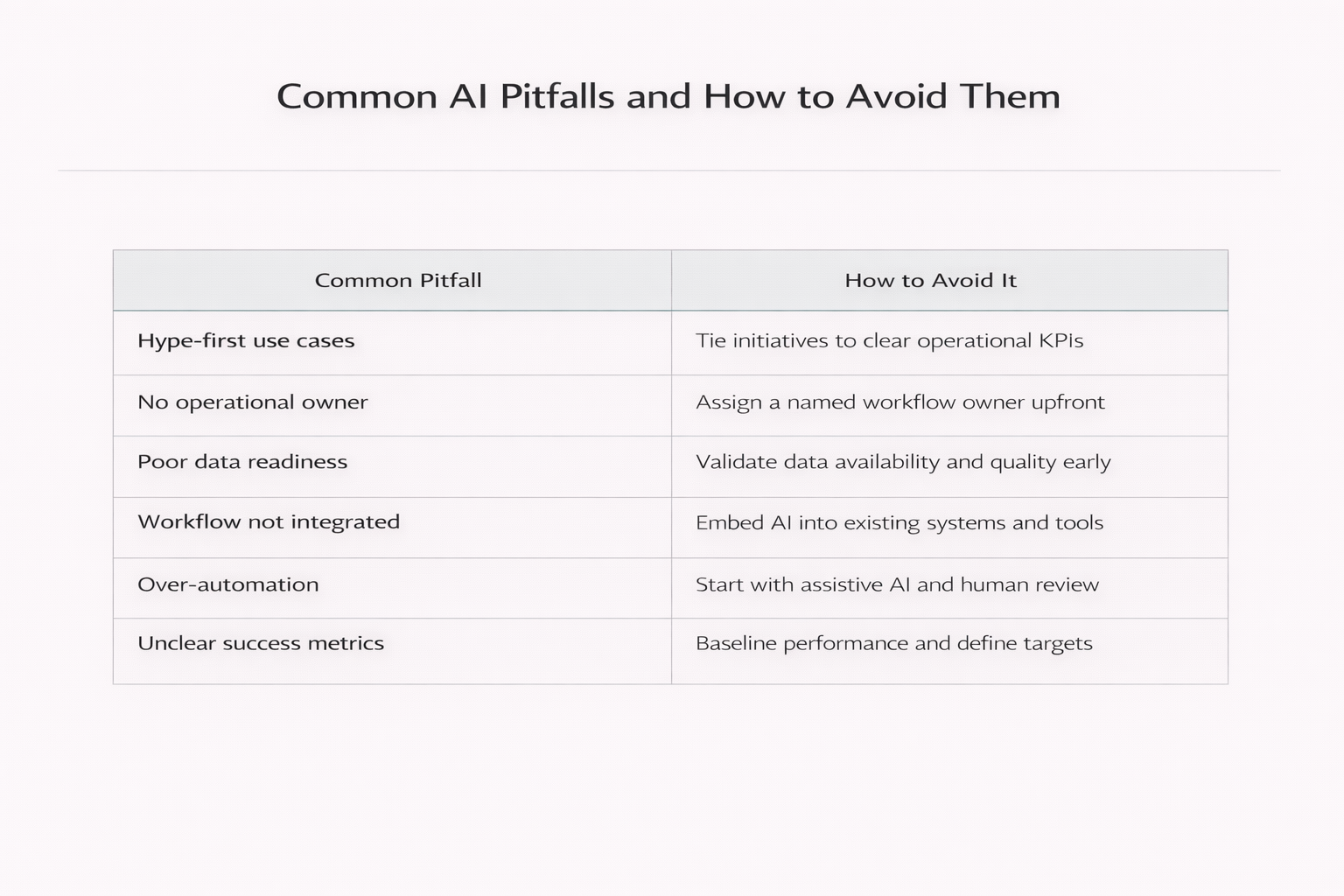

Common AI Pitfalls and How to Avoid Them

Hype-first use cases

Symptom: ROI is described using buzzwords rather than metrics.

Avoidance: Require a clear KPI and owner before approving a pilot.

Poor data readiness

Symptom: The pilot spends months cleaning data.

Avoidance: Validate data availability and quality upfront.

Workflow mismatch

Symptom: Users must copy and paste into separate tools.

Avoidance: Embed AI into existing systems and routines.

Unclear ownership

Symptom: No one owns scaling decisions after the pilot.

Avoidance: Assign a business owner from day one.

Over-automation

Symptom: Exceptions overwhelm the system and trust erodes.

Avoidance: Start with assistive AI and clear escalation paths.

Closing: moving from experimentation to impact

Most mid-market companies do not need more AI ideas. They need fewer, better-selected ones. When AI initiatives are tied to real operational pain points, designed for workflow fit, and measured against clear outcomes, they are far more likely to reach production and deliver value.

Key Takeaways for Business Leaders

- Most AI pilots fail not due to technology, but due to weak opportunity selection and poor workflow fit

- Pilot purgatory is an operational failure, not a technical one

- AI works best when it accelerates specific steps inside existing workflows

- Start with operational friction: manual work, bottlenecks, exceptions, and rework loops

- Define success before building—baseline current performance and set clear targets

- Embed AI into existing systems and assign ownership from day one

Ready to Move Beyond Pilot Mode?

Sentia Digital’s AI Opportunity Assessment helps teams identify high-value use cases, validate feasibility, and define a clear path from idea to operational impact.
