Why Most Support Copilots Fail and What the Successful Ones Do Differently

A practical, operations-first guide to building customer support copilots that improve agent productivity, service quality, and cost to serve—without creating new risks.

Executive Summary

Customer support copilots promise faster responses, lower costs, and better agent experiences. In practice, however, most initiatives stall after a pilot phase and never deliver sustained operational impact.

The underlying causes are rarely model quality or AI capability; instead, they are weak workflow integration, poor data grounding, unclear ownership, and limited change management.

Organizations that succeed treat copilots as Operational AI systems, not standalone tools, and design them around real agent workflows, measurable KPIs, and production-ready governance.

What Do We Mean by a Support Copilot?

Before examining why support copilots fail, it is important to establish clear definitions. Much of the disappointment around copilots stems from ambiguous language and inflated expectations.

- Support Copilot — An AI assistant that helps customers and/or agents complete support tasks faster and more consistently by retrieving information, drafting content, summarizing interactions, and triggering workflow steps, with appropriate human oversight.

- Operational AI — AI embedded directly into live business workflows, measured against operational KPIs, and supported by monitoring, governance, and continuous improvement.

- AI Opportunity — A clearly defined workflow-level problem where AI can deliver measurable improvements in speed, quality, or cost, with a realistic path to data access, integration, and adoption.

- AI Pilot — A time-boxed, controlled deployment designed to validate business value and feasibility before scaling.

- Feasibility — The practical ability to implement and sustain a use case given data readiness, integration complexity, security and compliance constraints, organizational change capacity, and ongoing operating costs.

- Orchestration Platform — The layer that connects AI capabilities to core business systems such as CRM, ticketing, telephony, and knowledge bases.

What Is Actually Going Wrong in Support Operations?

Why Support Copilots Fail—From Demo to Pilot Purgatory

Support leaders face increasing pressure to reduce cost to serve while improving service quality and agent retention. Copilots are often introduced as a solution, yet many struggle once they leave the demo environment.

In real operations, support teams typically deal with:

- Multiple disconnected systems (CRM, ticketing, chat, email, knowledge bases)

- Significant after-call and after-chat work

- Fragmented or outdated knowledge

- Long onboarding and ramp-up periods for new agents

Copilots fail when they:

- Sit outside the agent’s primary workflow, forcing context switching

- Generate outputs that require extensive verification, creating a “verification tax” that erodes the time saved

- Lack access to relevant customer, case, or entitlement context

- Are not grounded in approved knowledge, increasing error and compliance risk

The predictable outcome is low agent adoption, limited KPI movement, and repeated pilots that never reach production.

Why This Matters to Business Leaders

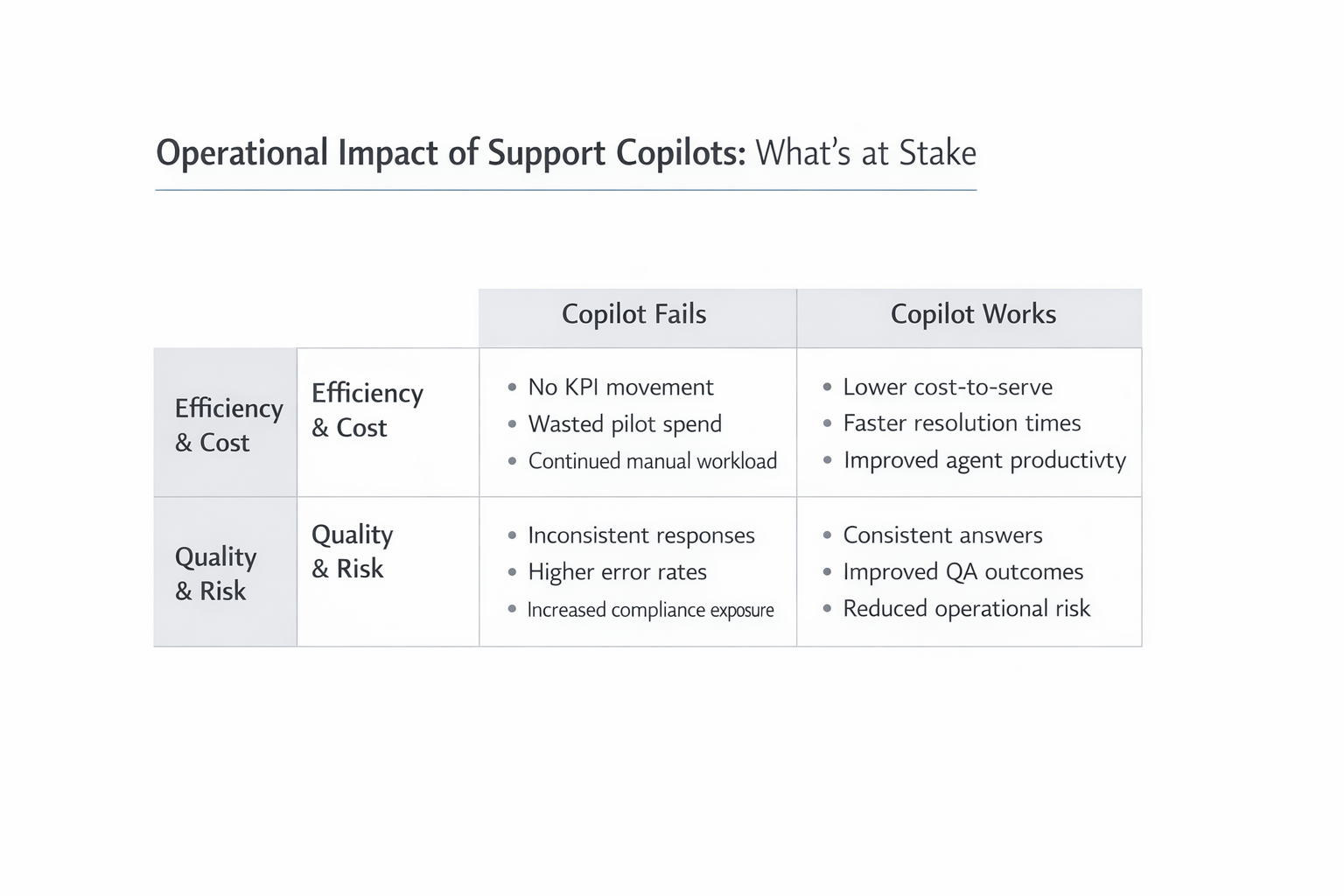

Operational Impact of Support Copilots—What’s at Stake

Support copilots are not experimental side projects. They directly influence efficiency, cost structure, customer experience, and risk exposure.

Efficiency and productivity

Efficiency gains come primarily from faster retrieval, drafting, and summarization.

Cost to serve

Labor remains the largest cost driver in support. Even modest reductions in handle time or after-call work compound significantly at scale. Failed pilots, by contrast, represent sunk cost and delayed value.
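To make the compounding effect concrete, here is a rough back-of-the-envelope sketch. Every figure in it (contact volume, seconds saved, loaded hourly cost) is a hypothetical placeholder rather than a benchmark, and the function name is illustrative; substitute your own baseline data.

```python
def annual_labor_savings(
    contacts_per_year: int,
    seconds_saved_per_contact: float,
    loaded_cost_per_agent_hour: float,
) -> float:
    """Estimate the yearly labor cost freed up by shaving handle time."""
    hours_saved = contacts_per_year * seconds_saved_per_contact / 3600
    return hours_saved * loaded_cost_per_agent_hour


# Hypothetical inputs for illustration only.
savings = annual_labor_savings(
    contacts_per_year=1_200_000,
    seconds_saved_per_contact=30,
    loaded_cost_per_agent_hour=36.0,
)
print(f"Estimated annual savings: ${savings:,.0f}")  # Estimated annual savings: $360,000
```

The estimate treats freed time as fully recoverable, which is rarely true in practice, so it is better read as an upper bound than as a forecast.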

Customer experience

Faster first response and quicker resolution improve satisfaction and reduce escalations. Intercom reports measurable improvements in response times and consistency when AI assists agents.

Quality and compliance

In regulated industries, inconsistent or inaccurate responses increase exposure. Copilots grounded in approved content improve consistency, while ungrounded tools amplify risk.

Strategic risk

McKinsey’s research shows that organizations that operationalize AI outperform peers that remain stuck in experimentation, particularly in customer-facing operations.

How AI Applies in Practice

The most effective support copilots focus on a small number of high-confidence tasks where AI delivers reliable, repeatable value.

Core copilot capabilities

- Summarize — Automatically generate after-call or after-chat summaries, customer history briefs, and escalation notes. This reduces documentation time and improves handoffs.

- Retrieve and ground — Surface relevant policies, knowledge base articles, and prior cases using approved sources. Retrieval-augmented generation (RAG) anchors AI outputs in that known content, which reduces, but does not eliminate, unsupported answers.

- Draft — Provide suggested responses and templates that agents review and edit. Drafting accelerates routine communication while keeping humans in control.

- Triage — Categorize and route tickets, detect urgency, and suggest next steps based on historical patterns.

- Orchestrate — Trigger workflow actions such as creating tickets, updating fields, or notifying downstream teams through integration with core systems.
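The "retrieve and ground" capability above can be sketched in a few lines. This is a minimal illustration, not a production design: the `Article` type, the `approved` flag, the sample knowledge base, and the word-overlap ranking are all assumptions made for the example, and a real deployment would typically use a search index or embedding-based retrieval instead.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Article:
    article_id: str
    title: str
    body: str
    approved: bool  # True only for content signed off by the knowledge owner


def tokenize(text: str) -> set[str]:
    """Lowercase words, strip basic punctuation, drop very short words."""
    words = (w.strip(".,?!()").lower() for w in text.split())
    return {w for w in words if len(w) > 2}


def retrieve_approved(query: str, articles: list[Article], top_k: int = 2) -> list[Article]:
    """Return up to top_k approved articles ranked by word overlap with the query."""
    query_words = tokenize(query)
    scored = []
    for article in articles:
        if not article.approved:
            continue  # never ground on drafts or retired content
        overlap = len(query_words & tokenize(f"{article.title} {article.body}"))
        if overlap > 0:
            scored.append((overlap, article))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [article for _, article in scored[:top_k]]


def build_grounded_prompt(query: str, sources: list[Article]) -> Optional[str]:
    """Build a prompt restricted to the retrieved sources, or None if there are none."""
    if not sources:
        return None  # caller should show an explicit "I do not know" instead
    context = "\n".join(f"[{a.article_id}] {a.title}: {a.body}" for a in sources)
    return (
        "Answer using only the sources below and cite their IDs.\n"
        f"{context}\n"
        f"Question: {query}"
    )


# Hypothetical knowledge base for illustration.
KB = [
    Article("KB-101", "Refund policy", "Refunds are issued within 14 days of purchase.", True),
    Article("KB-202", "Draft refund policy", "Refunds within 30 days (unapproved draft).", False),
    Article("KB-303", "Shipping times", "Standard shipping takes 5 business days.", True),
]

hits = retrieve_approved("What is the refund policy?", KB)
print([a.article_id for a in hits])  # ['KB-101']
```

The point of the sketch is the filter, not the ranking: unapproved content never reaches the model, and an empty result produces no prompt at all rather than an ungrounded answer.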

What successful copilots do differently

- Workflow-first design, not chat-first design

- Embedded delivery inside CRM or ticketing tools

- Guardrails such as confidence thresholds and explicit “I do not know” behavior

- Feedback loops to improve over time

- Auditability and monitoring from day one
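As one way to picture the guardrail item in the list above, the sketch below gates a drafted reply on a confidence threshold and on the presence of approved sources, and records the reason for each decision so it can be audited. The `Draft` and `Decision` types, the 0.75 threshold, and the assumption that the copilot exposes a confidence score between 0 and 1 are all hypothetical.

```python
from dataclasses import dataclass

FALLBACK = "I do not know. Please check the knowledge base or escalate."


@dataclass(frozen=True)
class Draft:
    text: str
    confidence: float  # assumed score in [0, 1] supplied by the copilot
    source_ids: tuple[str, ...]  # approved sources the draft cites


@dataclass(frozen=True)
class Decision:
    text: str  # what the agent actually sees
    reason: str  # recorded for audit and monitoring


def apply_guardrails(draft: Draft, min_confidence: float = 0.75) -> Decision:
    """Show the draft only if it is grounded and confident enough."""
    if not draft.source_ids:
        return Decision(FALLBACK, "no_approved_source")
    if draft.confidence < min_confidence:
        return Decision(FALLBACK, "low_confidence")
    return Decision(draft.text, "passed")


# Hypothetical drafts for illustration.
grounded = Draft("Refunds are issued within 14 days.", 0.91, ("KB-101",))
uncertain = Draft("Refunds may take up to 60 days.", 0.40, ("KB-101",))

print(apply_guardrails(grounded).reason)   # passed
print(apply_guardrails(uncertain).reason)  # low_confidence
```

The threshold is a tuning decision: set it too high and agents see too many fallbacks, set it too low and the verification burden returns. Logging the reason codes gives the feedback loop something to measure.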

Realistic Examples from Non-Tech Industries

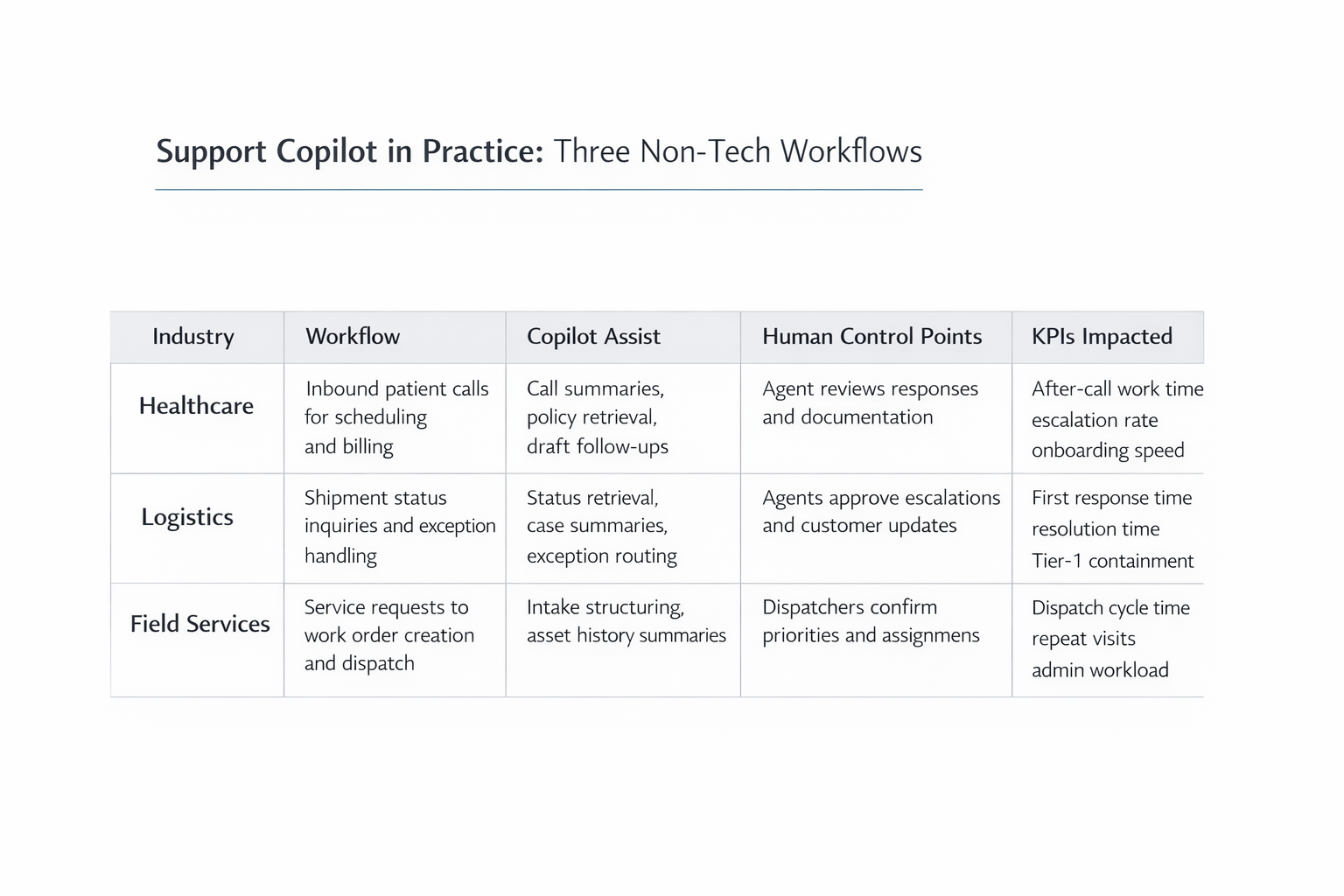

Support Copilot in Practice—Three Non-Tech Workflows

Support copilots are delivering value well beyond technology companies when applied with discipline.

Healthcare provider call center

A regional healthcare system implemented an agent-facing copilot to summarize patient calls and retrieve billing and scheduling policies. Clinicians retained full control over patient-facing decisions.

Logistics and transportation

A logistics provider deployed a hybrid copilot. Customer-facing AI handled routine shipment status questions, while agent-facing AI summarized shipment history and exceptions.

Field services dispatch

A field services company used a copilot to convert unstructured service requests into structured work orders and summarize asset history for dispatchers.

When a Support Copilot Is Not the Right Approach

Not every support scenario is a good candidate for AI augmentation. Copilots may be inappropriate when:

- Decisions carry high risk and low tolerance for error, such as legal rulings or medical advice

- There is no reliable source of truth for policies or procedures

- Core systems lack integration options and modernization is not planned

- The organization cannot support training and change management

- The initiative is framed primarily as headcount reduction rather than augmentation

In these cases, foundational issues should be addressed first, or AI should be limited to narrow, low-risk tasks.

Recommended Implementation Steps

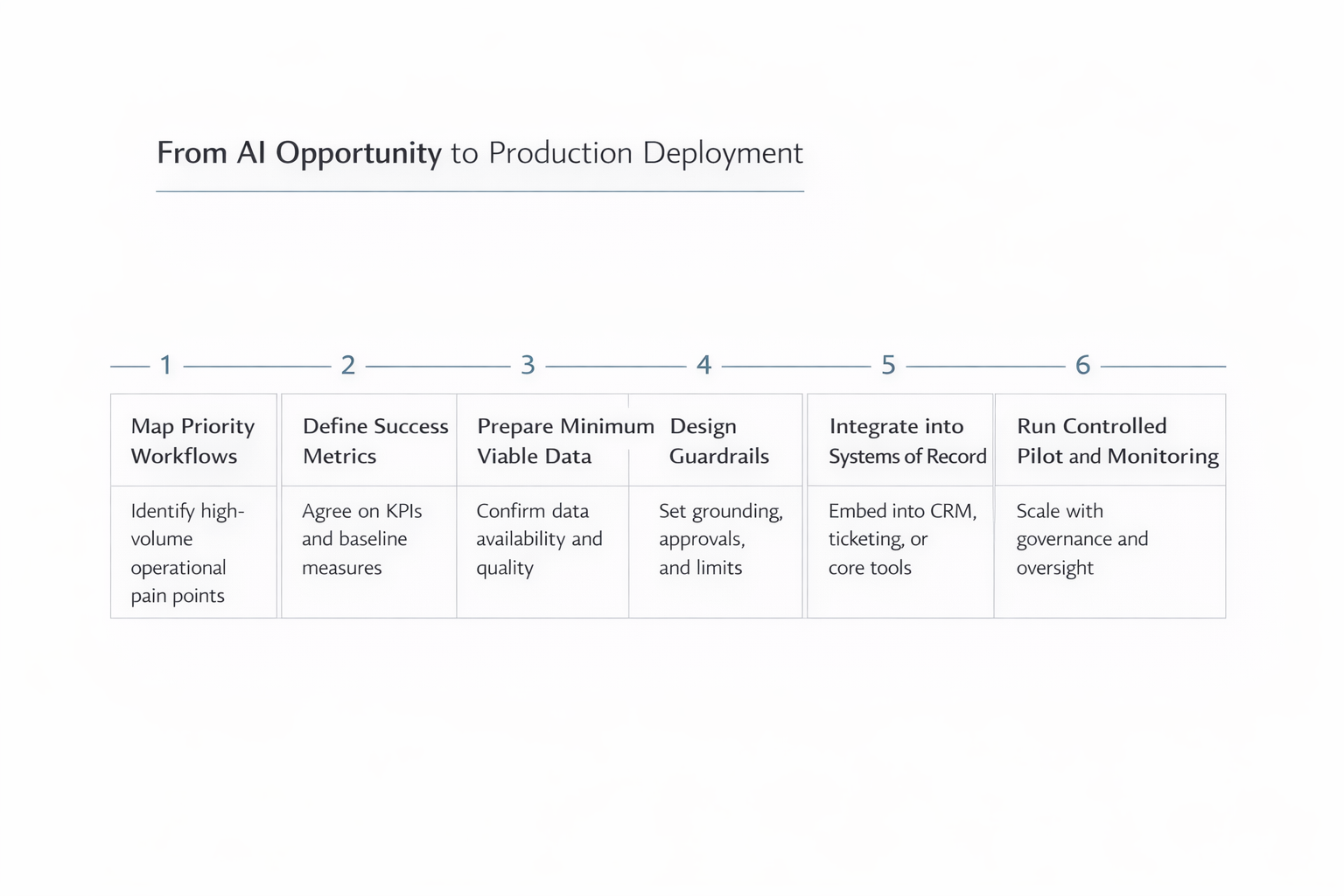

From AI Opportunity to Production Deployment

Organizations that succeed follow a structured path from opportunity identification to production deployment.

Map priority workflows

Identify high-volume, high-friction tasks such as summaries or knowledge search.

Define success metrics upfront

Select KPIs such as handle time, after-call work, first contact resolution, adoption rate, and quality scores.
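A baseline comparison for one of these KPIs can be as simple as the sketch below. The weekly handle-time figures are invented for illustration, and the helper name is an assumption; the same calculation applies to after-call work or any other metric with a pre-pilot baseline.

```python
from statistics import mean


def percent_change(baseline: float, pilot: float) -> float:
    """Relative change from baseline to pilot, in percent (negative = reduction)."""
    return (pilot - baseline) / baseline * 100


# Hypothetical weekly average handle times, in seconds.
baseline_aht = [400, 420, 410, 410]  # four weeks before the pilot
pilot_aht = [370, 365, 372, 369]     # four weeks during the pilot

change = percent_change(mean(baseline_aht), mean(pilot_aht))
print(f"Average handle time change: {change:+.1f}%")  # Average handle time change: -10.0%
```

A raw before-and-after comparison like this does not control for seasonality or ticket mix, so where possible compare the pilot group against a similar group of agents working without the copilot over the same weeks.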

Prepare minimum viable data

Establish authoritative knowledge sources and resolve obvious gaps.

Design for grounding and guardrails

Use approved content, confidence thresholds, and audit logs.

Integrate into systems of record

Embed copilots where agents already work and orchestrate actions through existing platforms.

Run a controlled pilot

Limit scope, use real data, and review results weekly over four to eight weeks.

Plan for production

Assign ownership, implement monitoring, and expand gradually once KPIs are proven.

Common Pitfalls and How to Avoid Them

- Tool not embedded in workflow — Integrate directly into CRM or ticketing tools.

- High verification burden — Use grounded retrieval and narrow early scope.

- Fragmented knowledge — Establish a single source of truth with clear ownership.

- No KPI definition — Baseline and track metrics weekly.

- Weak change management — Train agents and position AI as augmentation.

- Overreach into high-risk decisions — Keep humans firmly in control.

- Scale surprises — Estimate cost and performance at production volume early.

- Unclear governance — Define ownership and operating model upfront.

Key Takeaways for Business Leaders

- Most support copilots fail due to workflow and governance issues, not AI capability

- Successful copilots focus on narrow, high-volume tasks such as summarization, retrieval, and drafting

- Embedding AI into existing systems is essential for adoption

- Grounding, guardrails, and human control reduce risk and build trust

- Measurable KPIs and disciplined pilots are required to reach production

- A structured AI Opportunity Assessment helps prioritize the right starting points and design for scale

Executive FAQ

Will a support copilot replace agents?

No. Effective copilots augment agents by removing low-value work and improving consistency. Human judgment remains essential.

How long does it take to see value?

Well-scoped pilots often show measurable improvements within four to eight weeks.

What is the biggest risk leaders underestimate?

Change management. Even well-designed copilots fail if agents are not trained, involved, and supported.

Ready to Build a Support Copilot That Actually Works?

Sentia Digital helps organizations identify high-impact support workflows and define a clear, feasible path from pilot to production.
