A Simple AI Scoring Model Leaders Can Actually Use to Prioritize Operational Use Cases

A practical, executive-friendly framework for comparing AI opportunities objectively and selecting pilots that can reach production.

Executive Summary

Mid-market companies are not short on AI ideas. They are short on a reliable way to decide which ideas deserve investment. Without a clear prioritization method, many organizations fall into pilot purgatory—where technically successful demos never translate into operational value. A simple impact–feasibility scoring model helps leaders compare AI opportunities consistently, avoid pet-project bias, and focus limited resources on pilots that can realistically scale.

What Do We Mean by “Operational AI” and an “AI Pilot”?

Before discussing prioritization, it is essential to align on terminology. Much confusion and wasted effort stem from teams using the same words to mean different things.

Operational AI — AI embedded in live business workflows, producing repeatable outcomes in day-to-day operations. Not a demo or standalone experiment.

AI Opportunity — A scoped business problem where AI could measurably improve a workflow outcome—cost, cycle time, accuracy, or risk.

AI Pilot — A time-boxed implementation designed to validate impact and feasibility using real data, users, and defined KPIs.

Impact — Expected business value if the AI opportunity succeeds—financial benefit, efficiency gains, or risk reduction.

Feasibility — Practical ability to deliver given current data quality, integrations, skills, governance, and time-to-value.

Orchestration Platform — Technology connecting AI models to workflows, monitoring, and governance for reliable production operation.

Why Do AI Initiatives Stall After the First Demo?

Most mid-market AI initiatives fail for reasons that have little to do with algorithms or models. Teams start with enthusiasm, build a pilot, get a promising demo—then progress stalls.

- No clear operational owner: after the pilot phase, nobody takes accountability for adoption

- Success measured by model accuracy: not by the business outcomes that matter to stakeholders

- Missing workflow integration: users are left unsure how to adopt the solution

- Unclear decision authority: nobody is empowered to decide whether to scale or stop the initiative

The root cause is not immature technology. It is weak decision discipline.

What Does Poor Prioritization Cost the Business?

Efficiency and Productivity

Teams invest months in pilots that never reach production while manual processes remain untouched. Repeated false starts lead to fatigue and skepticism.

Cost and ROI

AI budgets, scarce data talent, and leadership attention are diluted across too many initiatives.

Customer Experience

High-value workflows that affect response times and accuracy are deprioritized in favor of more visible but lower-impact projects.

Risk and Compliance

Poorly chosen initiatives surface privacy, bias, or auditability issues late, increasing regulatory exposure.

For mid-market leaders, AI prioritization is not a technical exercise. It is capital allocation and risk management.

Where Does AI Realistically Help in Operations Today?

AI creates value when applied to specific operational patterns, not broad aspirations.

- Automation & Augmentation — Document processing, ticket classification, summarization. Reduces manual effort while keeping humans in the loop.

- Prediction & Forecasting: Demand forecasting, failure prediction, fraud detection, and risk scoring at a scale beyond human capacity.

- Decision Support — AI recommendations in existing tools with humans making final decisions.

- Workflow Integration — AI outputs triggering routing, prioritization, or approvals in operational systems.

The differentiator is not model sophistication. It is whether AI improves a measurable workflow outcome.

How Do Leaders Compare AI Opportunities Objectively?

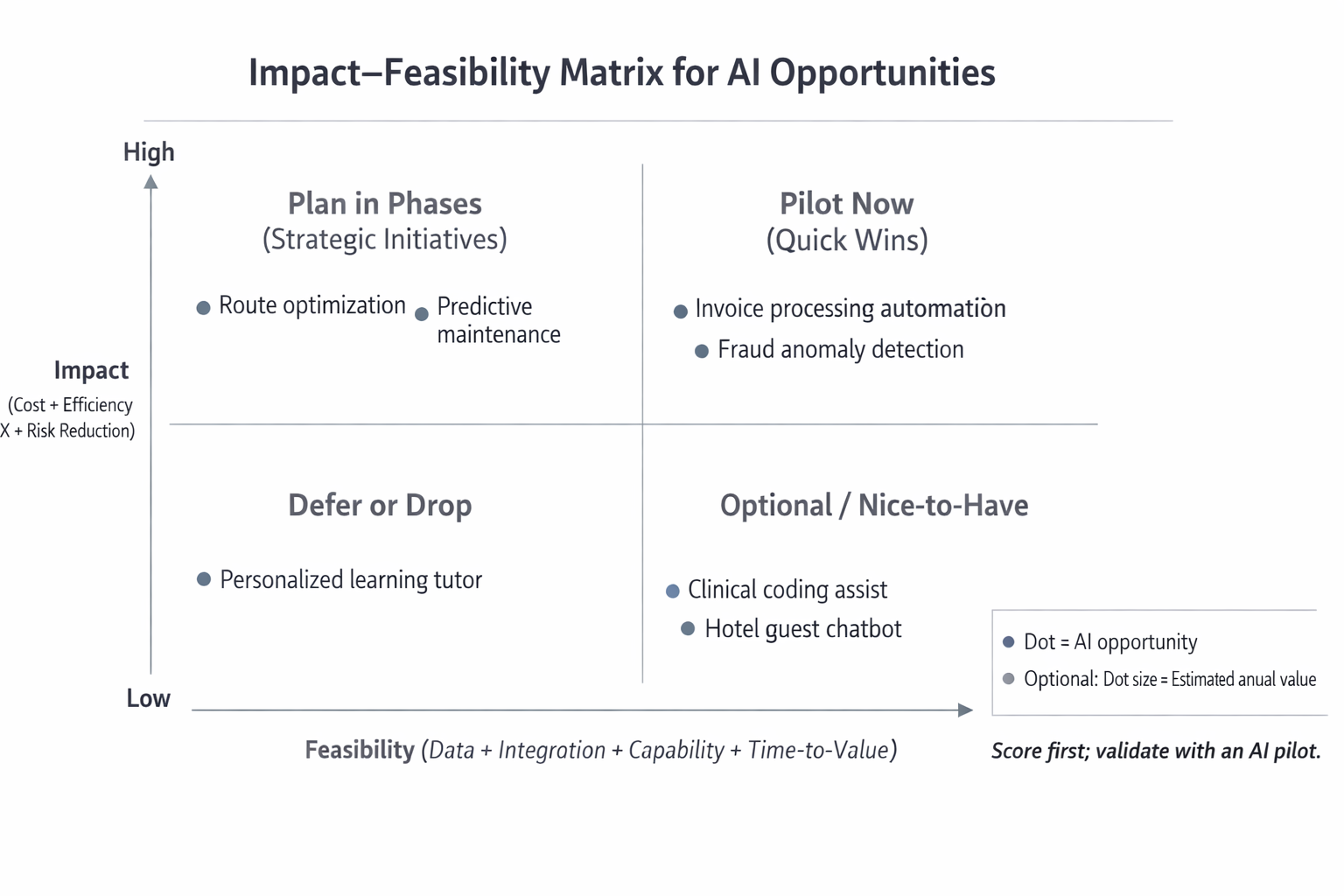

A simple impact–feasibility matrix gives leaders a shared, neutral way to compare AI opportunities using a 1 to 5 scale.

Impact considers:

- Size of the business problem

- Expected KPI improvement

- Financial or risk-related value

- Strategic relevance

Feasibility considers:

- Data availability and quality

- Integration complexity

- Internal capabilities

- Time-to-value

Impact–Feasibility Matrix for AI Opportunities

The goal is not perfect prediction. The goal is explicit tradeoffs.

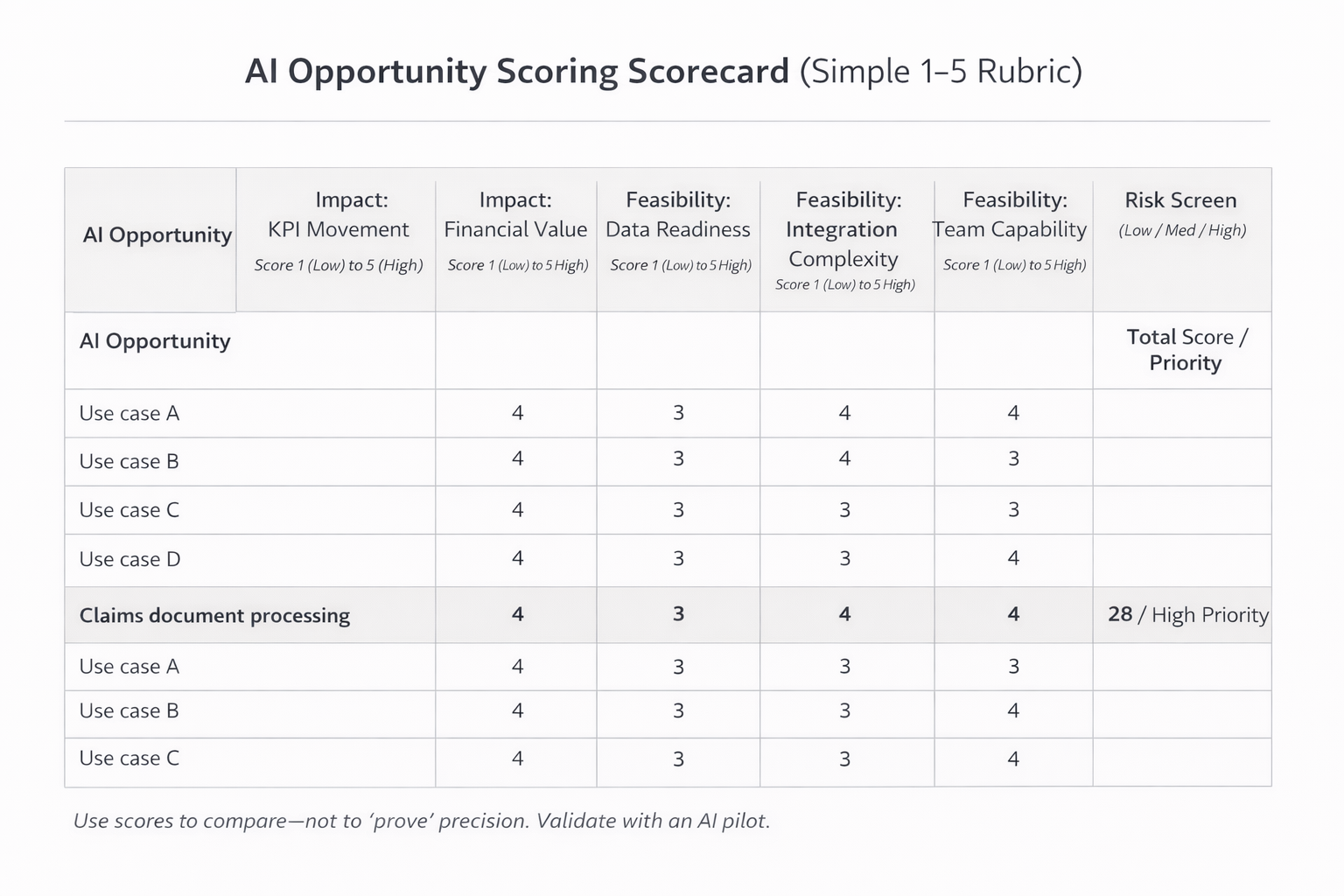
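The impact and feasibility criteria listed above can be turned into a small calculation. The following is a minimal sketch, not the framework's official method: the equal weighting, the 3.0 cutoff, the quadrant labels, and every name in the code (`Opportunity`, `quadrant`, the criterion keys) are assumptions made for illustration.

```python
from dataclasses import dataclass
from statistics import mean

# Criterion keys mirror the two lists above. Equal weighting and the
# 3.0 cutoff are illustrative assumptions, not part of the framework.
IMPACT_CRITERIA = ("problem_size", "kpi_improvement", "financial_or_risk_value", "strategic_relevance")
# Feasibility is scored so that 5 is always "easier" (e.g. integration = 5 means simple integration).
FEASIBILITY_CRITERIA = ("data", "integration", "capabilities", "time_to_value")
CUTOFF = 3.0


@dataclass
class Opportunity:
    name: str
    impact: dict[str, int]       # criterion -> score from 1 (low) to 5 (high)
    feasibility: dict[str, int]  # criterion -> score from 1 (hard) to 5 (easy)


def _average(scores: dict[str, int], criteria: tuple[str, ...]) -> float:
    """Average the 1-5 scores for the given criteria, rejecting out-of-range values."""
    for criterion in criteria:
        if not 1 <= scores[criterion] <= 5:
            raise ValueError(f"{criterion} must be scored 1-5, got {scores[criterion]}")
    return mean(scores[c] for c in criteria)


def quadrant(opp: Opportunity) -> tuple[float, float, str]:
    """Return (impact, feasibility, quadrant label) for one opportunity."""
    impact = _average(opp.impact, IMPACT_CRITERIA)
    feasibility = _average(opp.feasibility, FEASIBILITY_CRITERIA)
    if impact >= CUTOFF and feasibility >= CUTOFF:
        label = "pilot candidate"
    elif impact >= CUTOFF:
        label = "high impact, build foundations first"
    elif feasibility >= CUTOFF:
        label = "easy but low value"
    else:
        label = "deprioritize"
    return impact, feasibility, label


# Hypothetical example scores for a document-processing opportunity.
invoice_processing = Opportunity(
    name="Invoice document processing",
    impact={"problem_size": 4, "kpi_improvement": 4, "financial_or_risk_value": 5, "strategic_relevance": 3},
    feasibility={"data": 4, "integration": 3, "capabilities": 4, "time_to_value": 5},
)
print(quadrant(invoice_processing))
```

Keeping the calculation this plain is deliberate: the point of the matrix is to make tradeoffs visible, so anything beyond an average and a cutoff would add precision the underlying judgments do not have.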

What Does a Practical Scorecard Look Like?

To remain usable, scoring must stay simple—including impact criteria, feasibility criteria, and a risk screen.

AI Opportunity Scoring Scorecard (Simple 1–5 Rubric)

Scoring should occur in a cross-functional session with business, operations, IT, and finance. Precision matters less than consistency.

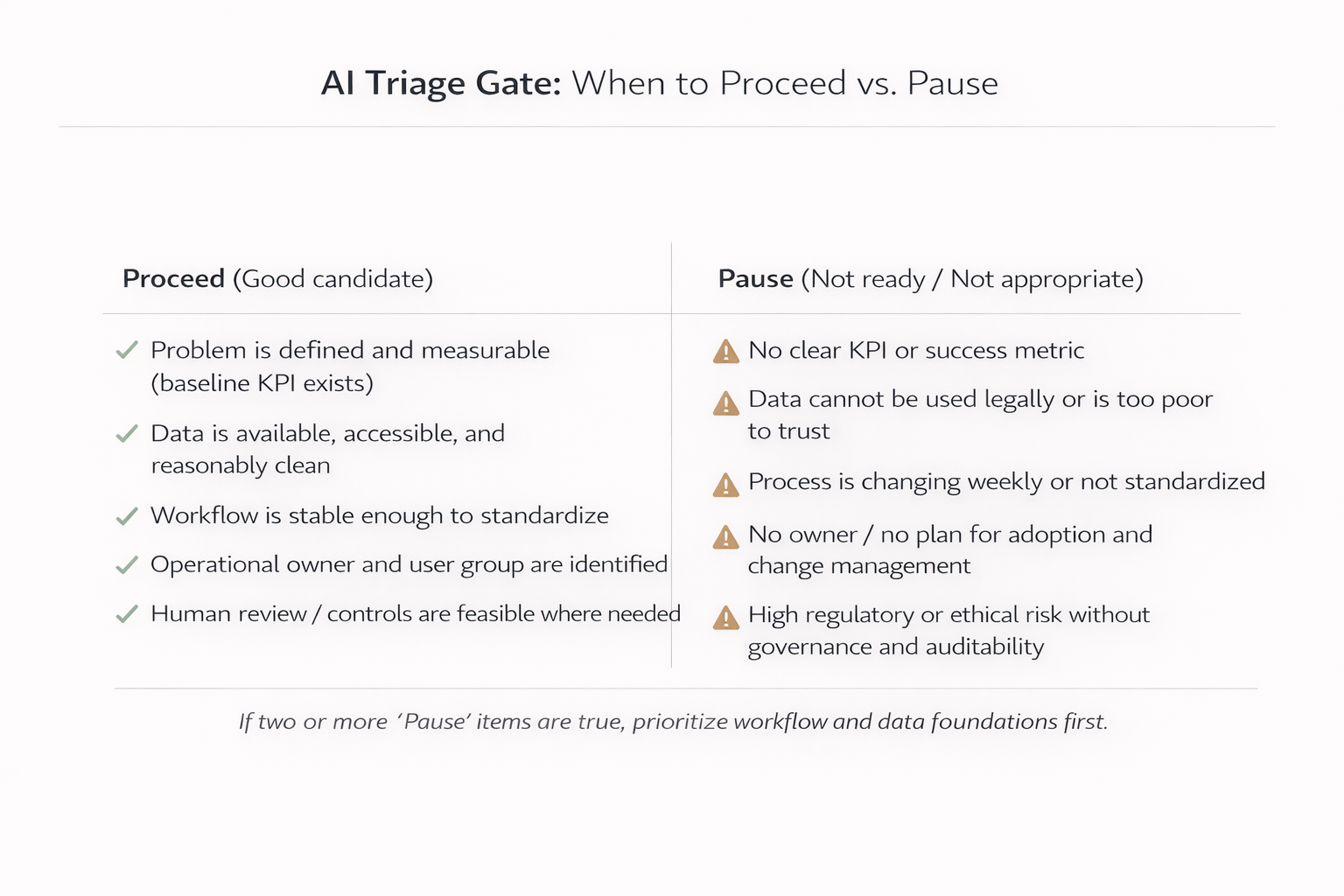
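A cross-functional scoring session can be consolidated in a few lines. The sketch below is one possible way to do it, under stated assumptions: each of the four functions gives a single 1 to 5 score per dimension, a gap of two or more points between scorers is treated as a prompt for discussion, and the risk screen is a simple pass/fail check on privacy, bias, and auditability flags. The function names, the `max_spread` threshold, and the flag names are hypothetical.

```python
from statistics import mean

# The four functions named in the text; the lowercase keys are an assumption.
FUNCTIONS = ("business", "operations", "it", "finance")


def consolidate(ratings: dict[str, int], max_spread: int = 2) -> tuple[float, bool]:
    """Average one dimension's scores and flag wide disagreement between functions."""
    missing = [f for f in FUNCTIONS if f not in ratings]
    if missing:
        raise ValueError(f"missing scores from: {', '.join(missing)}")
    scores = [ratings[f] for f in FUNCTIONS]
    spread = max(scores) - min(scores)
    return mean(scores), spread >= max_spread


def scorecard(impact: dict[str, int], feasibility: dict[str, int], risk_flags: dict[str, bool]) -> dict:
    """Combine impact, feasibility, and a pass/fail risk screen into one record."""
    impact_score, impact_disputed = consolidate(impact)
    feasibility_score, feasibility_disputed = consolidate(feasibility)
    return {
        "impact": impact_score,
        "feasibility": feasibility_score,
        # Disagreement is surfaced for discussion rather than averaged away.
        "needs_discussion": impact_disputed or feasibility_disputed,
        # Any raised flag fails the screen; True means "concern identified".
        "passes_risk_screen": not any(risk_flags.values()),
    }


# Hypothetical session results for a single opportunity.
result = scorecard(
    impact={"business": 5, "operations": 4, "it": 4, "finance": 3},
    feasibility={"business": 4, "operations": 3, "it": 3, "finance": 3},
    risk_flags={"privacy": False, "bias": False, "auditability": True},
)
print(result)
```

Flagging the spread rather than hiding it in the average reflects the idea that consistency matters more than precision: a two-point gap between finance and the business usually signals different assumptions that are worth resolving before the score is used.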

When Should Leaders Not Pursue AI or Pause?

AI is not always the right answer, even when the technology is available.

AI Triage Gate: When to Proceed vs. Pause

In these cases, strengthening process discipline or data foundations delivers more value than forcing AI.

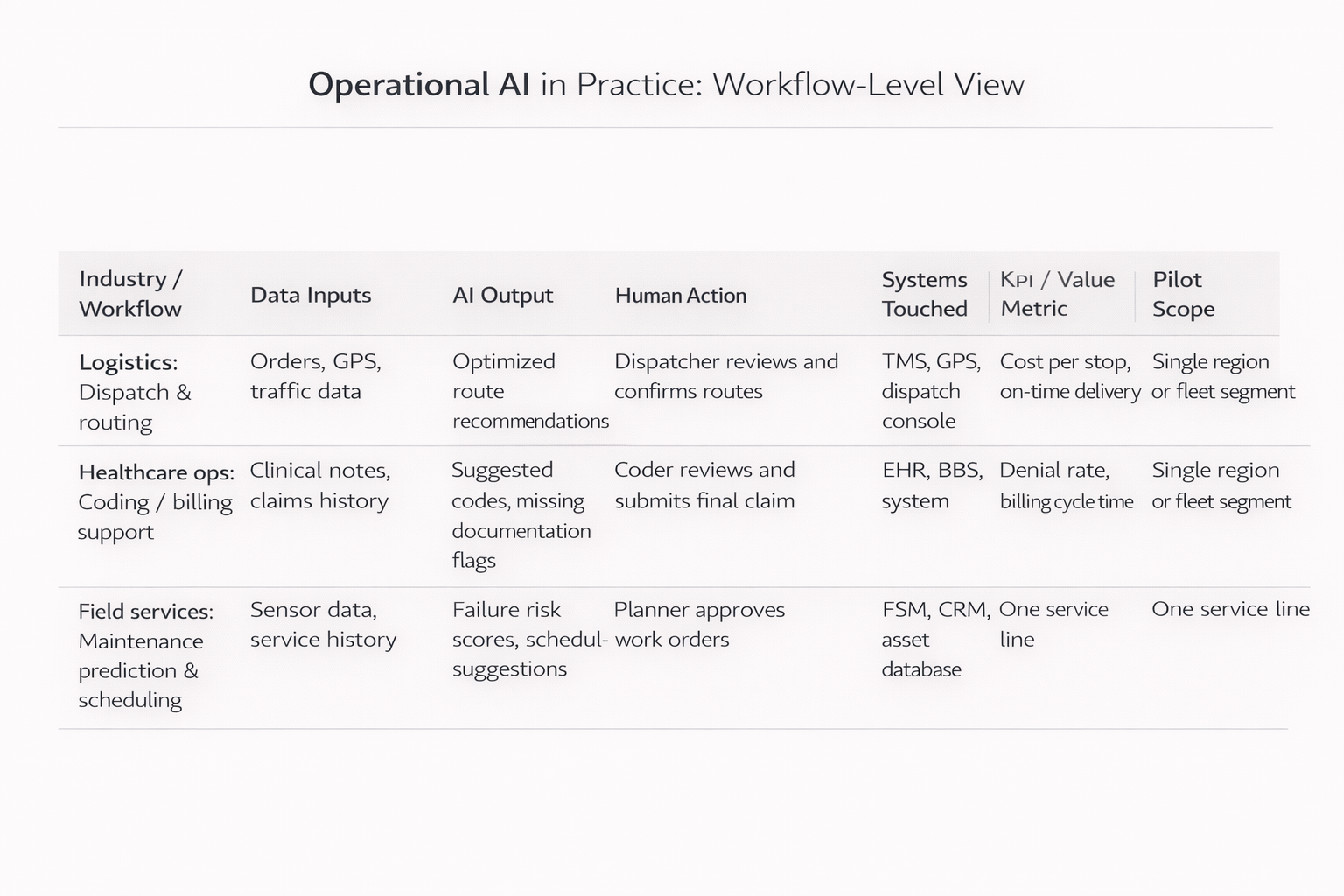
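A proceed-or-pause gate can be expressed as a short checklist. The sketch below is illustrative only: the four readiness checks are assumptions drawn from themes elsewhere in this article (operational ownership, business KPIs, process discipline, data foundations) and are not the specific criteria of the triage gate shown in the figure. The names `Readiness` and `triage` are hypothetical.

```python
from dataclasses import asdict, dataclass


@dataclass
class Readiness:
    # Each field is an assumed yes/no check, answered before a pilot is funded.
    has_operational_owner: bool  # someone accountable for adoption after the pilot
    has_business_kpi: bool       # success is defined by a business outcome, not model accuracy
    process_is_stable: bool      # the workflow is documented and followed consistently
    data_is_usable: bool         # the required data exists and is of adequate quality


def triage(readiness: Readiness) -> tuple[str, list[str]]:
    """Return ("proceed", []) if every check passes, else ("pause", [failed checks])."""
    gaps = [check for check, passed in asdict(readiness).items() if not passed]
    return ("proceed", []) if not gaps else ("pause", gaps)


# Hypothetical case: everything is in place except a stable underlying process.
decision, gaps = triage(
    Readiness(
        has_operational_owner=True,
        has_business_kpi=True,
        process_is_stable=False,
        data_is_usable=True,
    )
)
print(decision, gaps)
```

Returning the list of failed checks, not just the decision, tells the team what to fix first when the answer is "pause".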

What Does This Look Like in Real Operations?

Operational AI in Practice: Workflow-Level View (Logistics and Transportation, Healthcare Operations, Field Services)

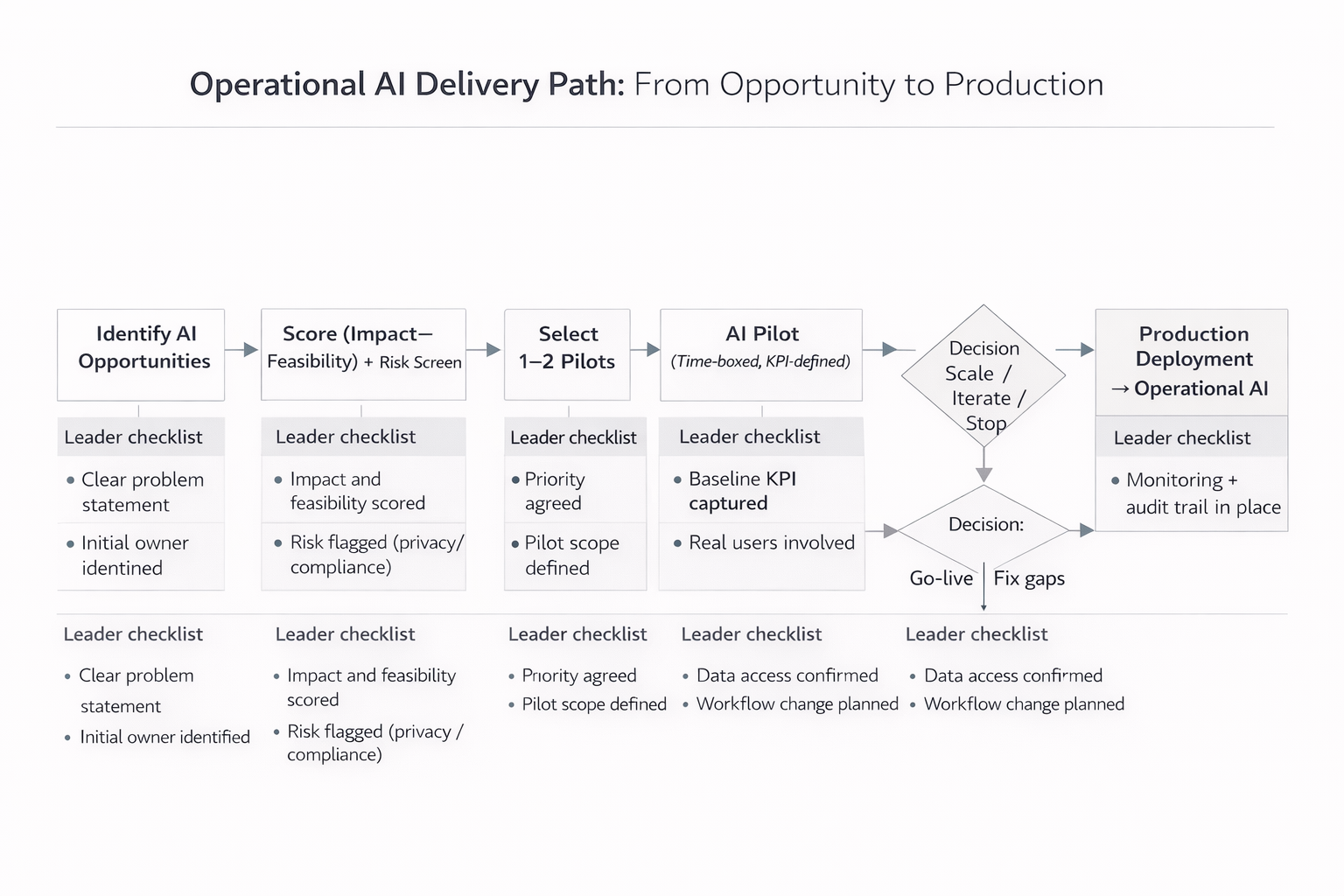

What Steps Should Leaders Follow to Select the Right Pilot?

From Opportunity to Production Deployment

What Should Leaders Watch Out For?

Common pitfalls include overstating impact and underestimating feasibility constraints; treating pilots as experiments rather than change initiatives; ignoring adoption, governance, and integration; running too many pilots in parallel; and allowing sunk costs to override evidence.

Research consistently shows that organizational and process issues, not model performance, account for most AI failures.

Key Takeaways for Business Leaders

- AI success depends more on prioritization than technology

- Impact and feasibility provide a shared decision framework

- Pilots should be designed with production in mind from day one

- Most AI value comes from improving existing workflows

- Saying no to low-value AI ideas is a leadership responsibility

- Sentia Digital supports this discipline through its AI Opportunity Assessment

Executive FAQ

How many AI pilots should we run at once?

One or two. Focus is more valuable than breadth, especially with limited talent.

How long should an AI pilot last?

Typically six to ten weeks. Long enough to validate value, short enough to force decisions.

Do we need an orchestration platform immediately?

Not always. But once a pilot succeeds, orchestration becomes critical for scaling reliably.

Ready to Prioritize Your AI Initiatives?

Move from ideas to pilots—and from pilots to operational value.
