AI for HR: Ethical Use Is About Workflow Design, Not Models

How mid-market organizations can deploy AI in hiring and talent management safely—by focusing on process design, governance, and human accountability.

Executive Summary

HR leaders are under growing pressure to use AI to reduce recruiter workload, improve consistency, and manage increasing hiring volume—while avoiding bias, regulatory risk, and reputational damage.

The core insight of this article is simple but often misunderstood:

Ethical AI in HR is determined far more by workflow design and governance than by model choice.

When AI is embedded thoughtfully—as an assistive layer inside well-designed processes—it can improve fairness, speed, and consistency. When it is layered onto broken workflows or allowed to make unchecked decisions, it creates risk instead of value.

The Business Problem: HR Workflows Under Strain

Most mid-market HR teams are stretched thin. Recruiting volume is rising, expectations are higher, and tolerance for inconsistency is shrinking.

Common symptoms include:

- Resume overload and manual screening fatigue

- Inconsistent shortlisting across teams

- Slow time-to-hire

- Internal candidates being overlooked because skills are invisible

These challenges are rarely caused by technology gaps alone. They are workflow problems—rooted in fragmented processes, unclear decision ownership, and limited operational discipline.

Why This Problem Matters Operationally and Financially

Inefficient HR workflows have direct business consequences.

Operationally:

- Vacant roles slow execution and strain teams

- Recruiters spend time on low-value tasks instead of engaging candidates

- Managers lose confidence in hiring and promotion decisions

Financially:

- Higher cost per hire

- Higher vacancy costs as roles stay open longer

- Increased attrition

- Reputational risk when candidates perceive unfair or opaque processes

HR workflow quality directly affects cost, speed, and trust—not just HR efficiency.

Typical HR Workflows Involved

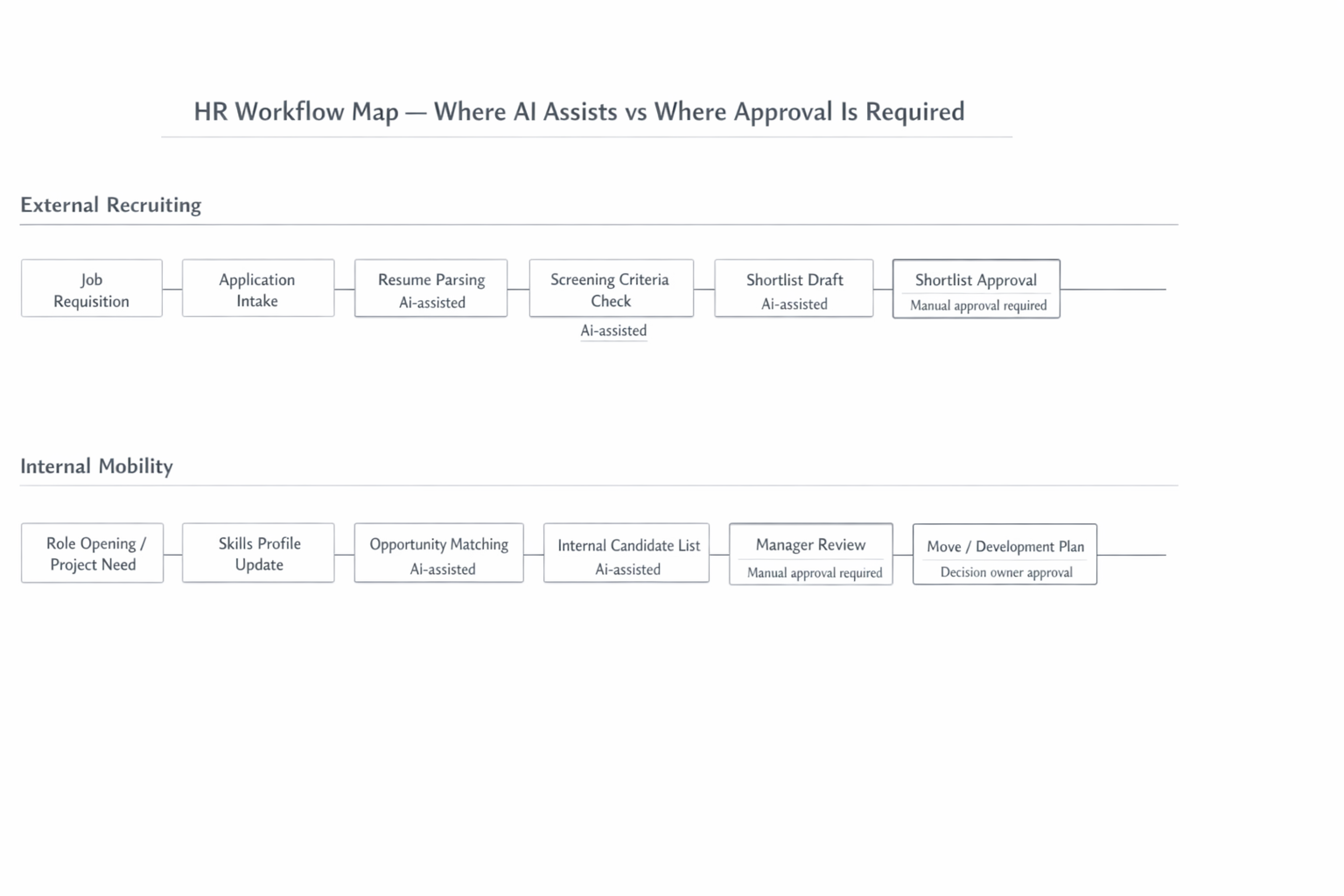

HR Workflow Map — Where AI Assists vs Where Humans Decide

External recruiting workflows typically include job intake, application parsing, screening, shortlisting, interviews, and final decisions.

Internal mobility workflows include skills visibility, opportunity matching, manager review, and movement or development planning.

Problems arise when:

- Screening criteria vary across recruiters

- Decisions lack documentation

- Escalation paths are missing

- Internal talent data is fragmented

These are the points where AI can help—if the workflow is clearly designed first.

Why Ethical AI Fails When Framed as a Model Problem

Many organizations ask whether a model is biased. The more important question is how the model is used inside the workflow.

Ethical failures usually stem from:

- Black-box tools embedded in ambiguous decision points

- AI outputs treated as final decisions

- Missing audit trails

- Unclear accountability

Even technically sound models can produce unethical outcomes if workflow design is weak. Ethics in HR is fundamentally an operational challenge.

Where AI Can Realistically Help

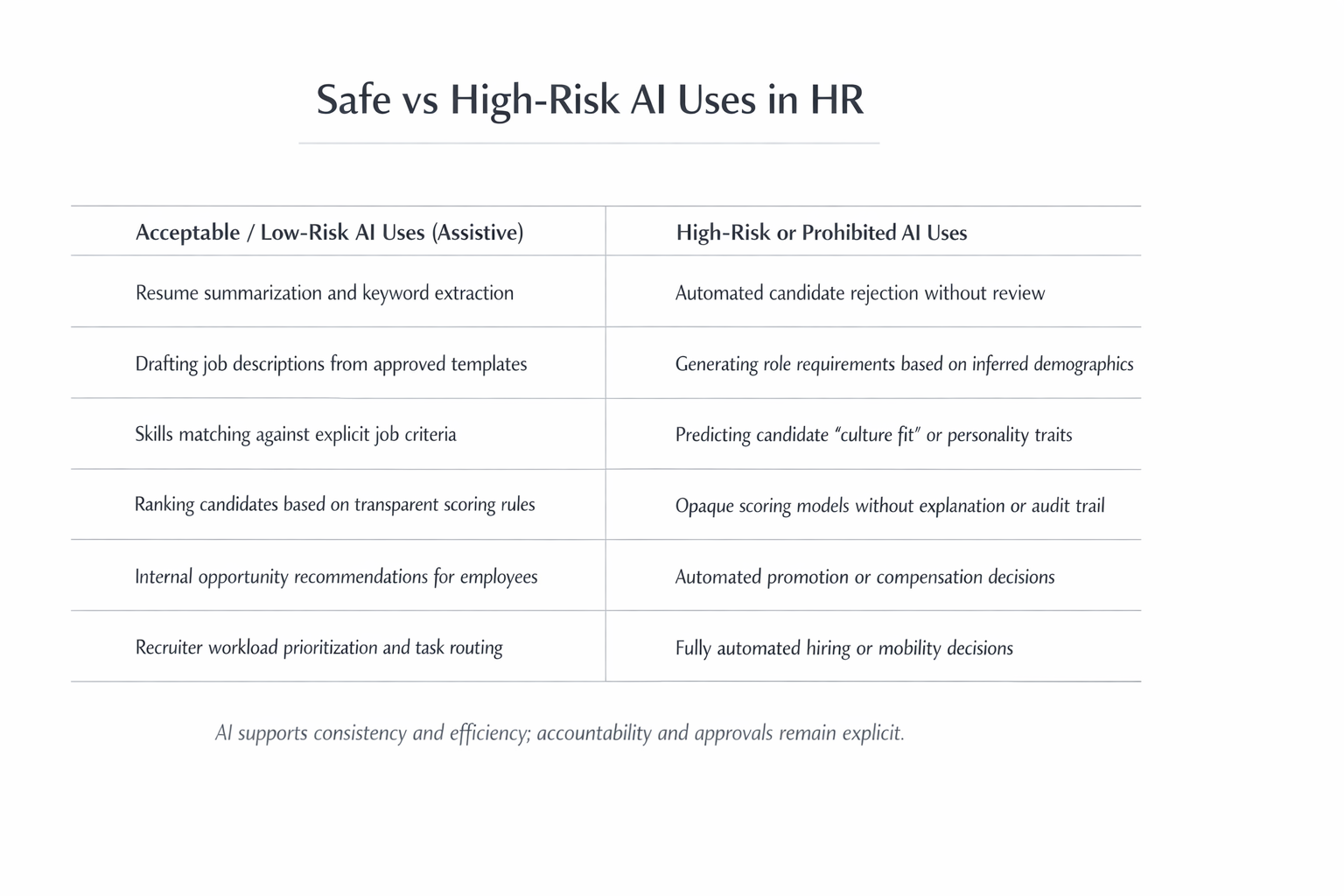

Safe vs High-Risk AI Uses in HR

AI delivers the most value when it is assistive, structured, and explainable.

Realistic use cases include:

- Resume parsing and data extraction

- Screening support based on job-relevant criteria

- Shortlist drafting for recruiter review

- Interview scheduling automation

- Interview summarization

- Skills-based internal matching

The goal is not automation for its own sake. It is decision support at scale that reduces workload and improves consistency without replacing accountability.
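As one illustration of "assistive, structured, and explainable," here is a minimal sketch of skills-based internal matching. The `Employee` and `Opening` types, the sample skills, and the simple overlap score are hypothetical and not drawn from any specific HR product. The output is a ranked list with reasons for a manager to review, not a decision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Employee:
    name: str
    skills: frozenset


@dataclass(frozen=True)
class Opening:
    title: str
    required_skills: frozenset


def match_employee(employee, opening):
    """Return an explainable match: coverage score plus matched and missing skills."""
    matched = employee.skills & opening.required_skills
    missing = opening.required_skills - employee.skills
    required = len(opening.required_skills)
    coverage = len(matched) / required if required else 0.0
    return {
        "employee": employee.name,
        "coverage": round(coverage, 2),
        "matched": sorted(matched),
        "missing": sorted(missing),
    }


def suggest_candidates(employees, opening, min_coverage=0.5):
    """Rank internal candidates for manager review; nothing here moves anyone."""
    matches = [match_employee(e, opening) for e in employees]
    shortlisted = [m for m in matches if m["coverage"] >= min_coverage]
    return sorted(shortlisted, key=lambda m: m["coverage"], reverse=True)


if __name__ == "__main__":
    opening = Opening("Data Analyst", frozenset({"sql", "excel", "reporting", "python"}))
    staff = [
        Employee("A. Rivera", frozenset({"sql", "excel", "reporting"})),
        Employee("B. Chen", frozenset({"python", "customer support"})),
    ]
    for suggestion in suggest_candidates(staff, opening):
        print(suggestion)
```

Because each suggestion lists the matched and missing skills, a manager can see why someone surfaced and can challenge the result. The `min_coverage` cutoff is an arbitrary placeholder that a real team would tune.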

Bias and Transparency Are Workflow Design Problems

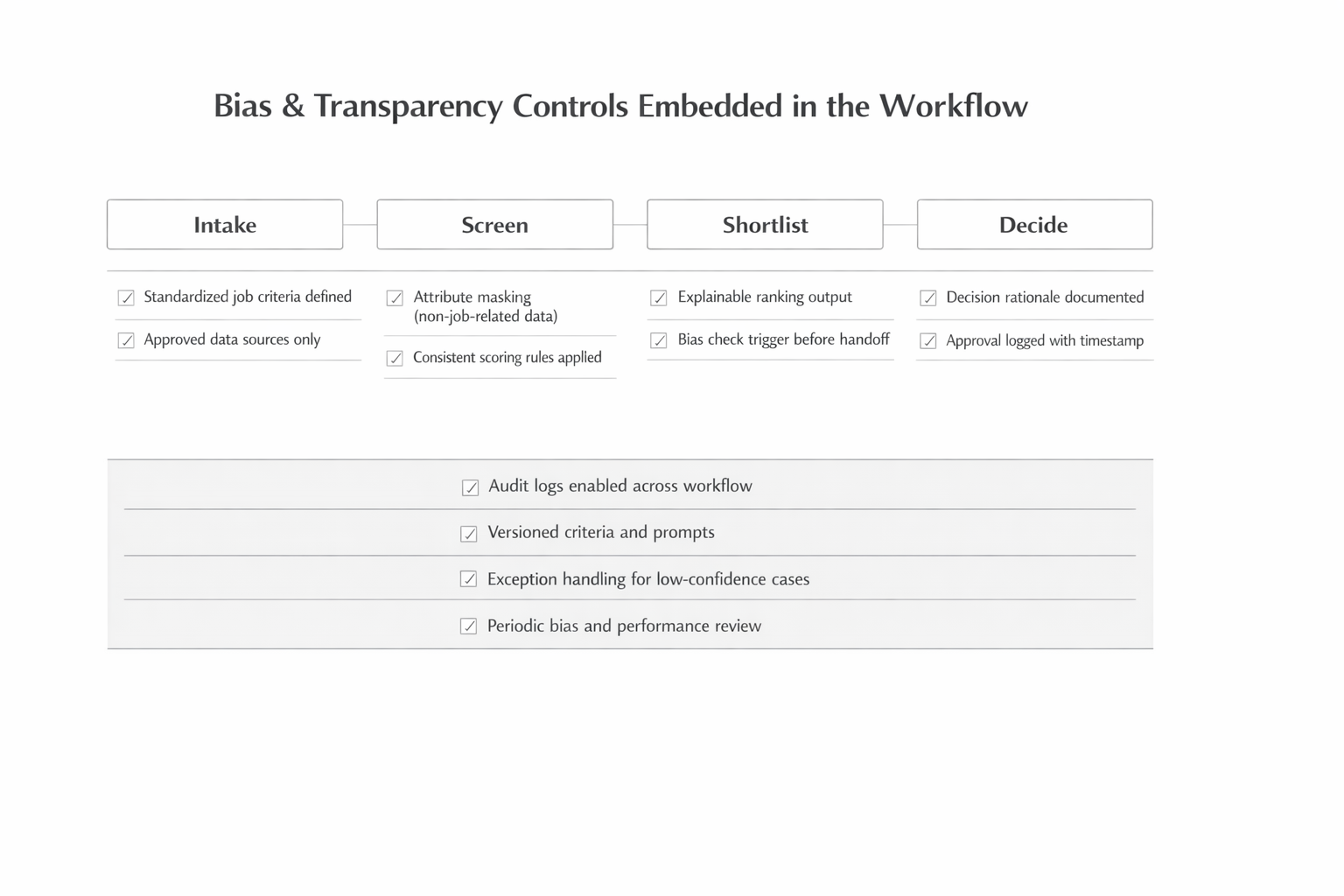

Bias & Transparency Controls Embedded in the Workflow

Bias accumulates when controls are missing:

- Over-collection of irrelevant data

- Opaque scoring

- Silent overrides

- Lack of monitoring

Ethical workflows embed controls at every stage:

- Job-relevant inputs only

- Explainable scoring

- Required human review

- Logged decisions

- Regular monitoring
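The controls above can be sketched in a few lines of code. The example below is a simplified illustration under stated assumptions: the field names, the allow-list, and the two-criterion scoring are all hypothetical, and a real screening tool would be more involved. It shows three of the controls working together: only job-relevant inputs reach the scorer, the score comes with a per-criterion breakdown, and every result is logged as pending human review.

```python
from datetime import datetime, timezone

# Hypothetical allow-list: only job-relevant fields may reach the scorer.
JOB_RELEVANT_FIELDS = {"years_experience", "certifications", "skills"}


def filter_inputs(application):
    """Drop every field that is not on the job-relevant allow-list."""
    return {k: v for k, v in application.items() if k in JOB_RELEVANT_FIELDS}


def score_application(inputs, criteria):
    """Score against explicit criteria and return a per-criterion breakdown.

    Assumes criteria["min_years"] is positive and criteria["skills"] is non-empty.
    """
    skills_held = set(inputs.get("skills", []))
    breakdown = {
        "experience": min(inputs.get("years_experience", 0) / criteria["min_years"], 1.0),
        "skills": len(skills_held & criteria["skills"]) / len(criteria["skills"]),
    }
    total = sum(breakdown.values()) / len(breakdown)
    return round(total, 2), breakdown


def screen(application, criteria, audit_log):
    """Produce a logged recommendation that still requires a human reviewer."""
    inputs = filter_inputs(application)
    score, breakdown = score_application(inputs, criteria)
    record = {
        "candidate_id": application["candidate_id"],
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "inputs_used": sorted(inputs),
        "score": score,
        "breakdown": breakdown,
        "status": "pending_human_review",
    }
    audit_log.append(record)
    return record


if __name__ == "__main__":
    log = []
    application = {
        "candidate_id": "c-001",
        "age": 52,                      # present in the source data, never scored
        "zip_code": "00000",            # present in the source data, never scored
        "years_experience": 4,
        "skills": ["forklift", "inventory"],
    }
    criteria = {"min_years": 5, "skills": {"forklift", "inventory", "safety"}}
    print(screen(application, criteria, log))
```

The `inputs_used` field in each record makes it possible to verify later that fields such as age or location never influenced a score.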

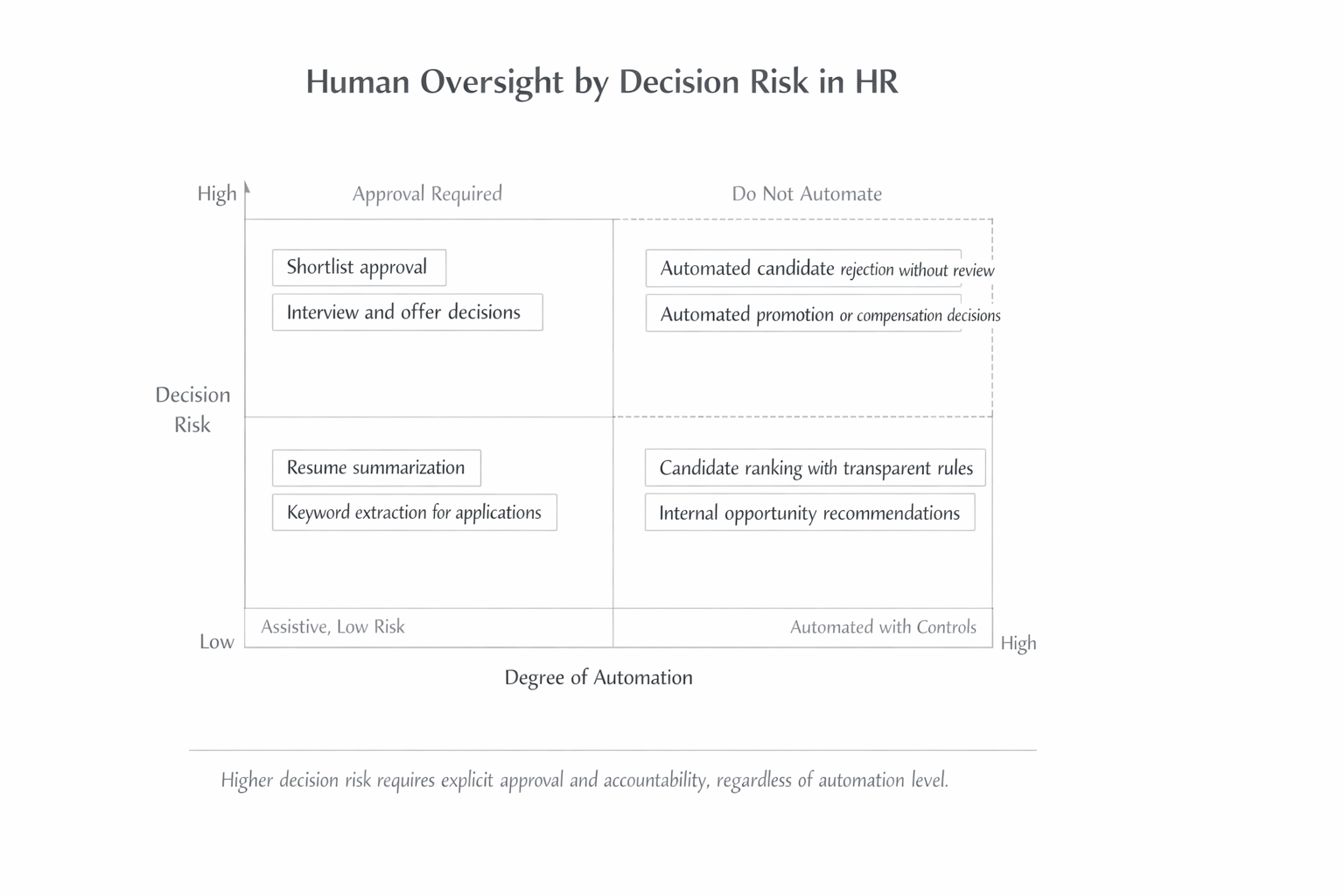

Human-in-the-Loop: Matching Oversight to Decision Risk

Human-in-the-Loop Oversight by Decision Risk

Low-risk tasks like scheduling and parsing can be heavily automated. High-risk decisions such as offers, promotions, or terminations should never be automated.

A practical rule applies:

AI may recommend, humans decide, and decisions must be documented.

Matching oversight to decision risk allows AI to scale without losing accountability.
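One way to make the "AI may recommend, humans decide" rule enforceable is to encode it in the workflow itself. The sketch below is illustrative only: the task names, the three risk tiers, and the `record_decision` helper are assumptions, and each organization would define its own mapping. Unknown tasks default to the strictest tier.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical mapping of HR tasks to risk tiers.
TASK_RISK = {
    "interview_scheduling": Risk.LOW,
    "resume_parsing": Risk.LOW,
    "shortlist_draft": Risk.MEDIUM,
    "offer": Risk.HIGH,
    "promotion": Risk.HIGH,
    "termination": Risk.HIGH,
}

OVERSIGHT = {
    Risk.LOW: "automate, with periodic spot checks",
    Risk.MEDIUM: "AI drafts, a named human approves",
    Risk.HIGH: "human decides, AI input is advisory only",
}


def required_oversight(task):
    """Look up the oversight level; unmapped tasks are treated as high risk."""
    risk = TASK_RISK.get(task, Risk.HIGH)
    return risk, OVERSIGHT[risk]


def record_decision(task, ai_recommendation, decided_by=None, decision=None, rationale=None):
    """Refuse to record anything above low risk without a documented human decision."""
    risk, oversight = required_oversight(task)
    if risk is not Risk.LOW and not (decided_by and decision and rationale):
        raise PermissionError(f"{task!r} needs a documented human decision ({oversight})")
    return {
        "task": task,
        "risk": risk.value,
        "ai_recommendation": ai_recommendation,
        "decision": decision or ai_recommendation,
        "decided_by": decided_by or "system",
        "rationale": rationale or "low-risk task automated under policy",
    }


if __name__ == "__main__":
    print(record_decision("interview_scheduling", "Tuesday 10:00"))
    print(record_decision(
        "offer",
        ai_recommendation="extend offer",
        decided_by="hiring.manager@example.com",
        decision="extend offer",
        rationale="Strongest structured interview scores; references verified.",
    ))
```

The design choice that matters here is the failure mode: a high-risk decision with no named decider or rationale cannot be recorded at all, so accountability is not left to habit.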

Examples from Slow-Adoption Industries

Even conservative industries are adopting AI carefully:

Healthcare

Uses AI-assisted screening and scheduling to address staffing shortages while maintaining compliance with credentialing requirements.

Manufacturing

Applies skills-based screening to widen talent pools and reduce time-to-fill for specialized roles.

Financial Services

Deploys internal talent marketplaces to surface hidden skills and reduce external hiring costs.

Across industries, successful adoption follows the same pattern: start with low-risk workflows, keep humans accountable, and expand only after proving value.

Implementation Guardrails for Mid-Market Organizations

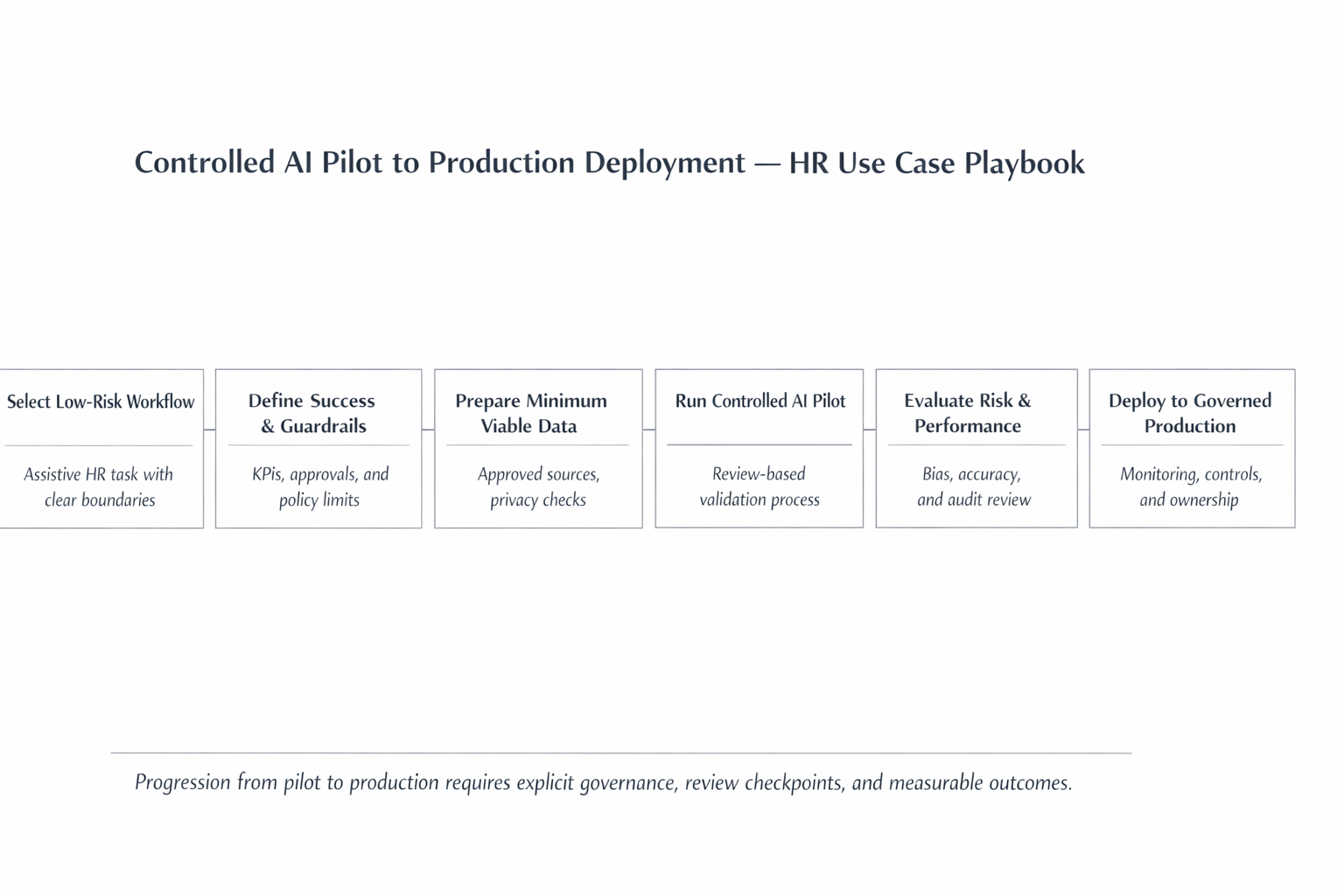

Controlled AI Pilot to Production Deployment — HR Use Case Playbook

Successful teams follow a disciplined path:

Select one low-risk workflow

Choose a process like resume parsing or interview scheduling.

Define success metrics

Set clear KPIs such as time saved, consistency improvement, or recruiter satisfaction.

Prepare minimum viable data

Extract data from your applicant tracking system (ATS) and HR information system (HRIS). The data does not need to be perfect to start a pilot.

Design guardrails

Define what AI can recommend vs what requires human approval.

Run a controlled pilot

Test with a small team, measure outcomes, and gather feedback.

Scale with governance

Expand systematically with monitoring, audit trails, and clear ownership.

This approach avoids stalled pilots and unmanaged risk.
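For the "define success metrics" step, it helps to agree on the arithmetic before the pilot starts. The sketch below shows two possible KPIs: time saved per screened application (compared by median) and recruiter consistency (how often two recruiters reach the same call on the same candidate). The function names and sample numbers are made up for illustration.

```python
from statistics import median


def percent_time_saved(baseline_minutes, pilot_minutes):
    """Compare median handling time before and during the pilot."""
    before = median(baseline_minutes)
    after = median(pilot_minutes)
    return round((before - after) / before * 100, 1)


def agreement_rate(decision_pairs):
    """Share of candidates on whom two recruiters made the same call."""
    same = sum(1 for a, b in decision_pairs if a == b)
    return round(same / len(decision_pairs), 2)


if __name__ == "__main__":
    # Illustrative numbers only: minutes spent screening each application.
    baseline = [12, 15, 11, 14]
    pilot = [7, 9, 8, 6]
    pairs = [
        ("advance", "advance"),
        ("reject", "advance"),
        ("reject", "reject"),
        ("advance", "advance"),
    ]
    print("Time saved (%):", percent_time_saved(baseline, pilot))
    print("Recruiter agreement:", agreement_rate(pairs))
```

Medians are used instead of averages so that one unusually long review does not distort the comparison. Measuring agreement both before and during the pilot shows whether consistency actually improved.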

Key Risks and Constraints Leaders Must Address

Leaders must explicitly plan for:

- Regulatory exposure: NYC Local Law 144 requires bias audits and candidate notice for automated employment decision tools, and the EU AI Act treats AI used in recruitment and worker management as high-risk

- Data privacy and consent: Candidate data must be handled with clear consent and retention policies

- Vendor opacity: Many AI vendors resist transparency about how their models work

- Model drift: Model performance can degrade as roles and applicant pools change, and the decline goes unnoticed without monitoring

- Recruiter trust: Teams may resist tools they don’t understand or trust

- Change management: New workflows require training and ongoing support

Ignoring these constraints slows adoption and increases long-term risk.
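Regulatory exposure and drift can both be monitored with the same simple check, run on a schedule. The sketch below computes selection rates and impact ratios by group, the measure commonly used in bias audits: each group's selection rate divided by the rate of the most-selected group. The group labels and counts are invented, and the 0.8 threshold is the EEOC "four-fifths" rule of thumb used here as an assumption, not a legal standard for any particular jurisdiction.

```python
def selection_rates(outcomes):
    """outcomes maps group -> (selected, total applicants)."""
    return {group: selected / total for group, (selected, total) in outcomes.items() if total}


def impact_ratios(outcomes):
    """Each group's selection rate relative to the most-selected group."""
    rates = selection_rates(outcomes)
    top = max(rates.values(), default=0)
    if top == 0:
        raise ValueError("No selections recorded; impact ratios are undefined.")
    return {group: rate / top for group, rate in rates.items()}


def groups_to_review(outcomes, threshold=0.8):
    """Flag groups whose impact ratio falls below the chosen threshold."""
    return sorted(g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold)


if __name__ == "__main__":
    # Illustrative counts for one screening stage in one period.
    this_quarter = {"group_a": (40, 100), "group_b": (28, 100)}
    for group, ratio in impact_ratios(this_quarter).items():
        print(group, round(ratio, 2))
    print("Needs review:", groups_to_review(this_quarter))
```

A flagged group is a prompt for human investigation, not proof of bias. Comparing the ratios period over period is also a practical way to notice drift before it becomes a compliance problem.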

Key Takeaways for Business Leaders

- Ethical AI outcomes are designed, not purchased

- Workflow clarity matters more than model sophistication

- AI should reduce workload and improve consistency—not replace accountability

- Start small, govern early, and scale deliberately

- Human-in-the-loop oversight must match decision risk

A Practical Path Forward

Most HR AI failures are not caused by bad technology. They stem from unclear workflows, weak governance, and misplaced expectations.

Mid-market leaders who treat AI as an operational capability—rather than a shortcut—are far more likely to see sustainable results.

Ready to Deploy AI in HR Responsibly?

Sentia Digital helps HR and operations leaders identify high-value AI opportunities, design ethical workflows, and build pilots that scale safely.
