AI Success Is an Organizational Pattern, Not a Technology Choice

Why mid-market leaders should focus less on tools and more on how their organization actually operates.

Executive Summary

AI adoption is accelerating across mid-market organizations, yet most initiatives fail to reach production or deliver sustained ROI. Research consistently shows that the primary causes are organizational and operational, not technical. Successful companies treat AI as an operational capability, embedding it into workflows with clear ownership, metrics, and governance.

The Operational Problem: Why Do So Many AI Initiatives Stall?

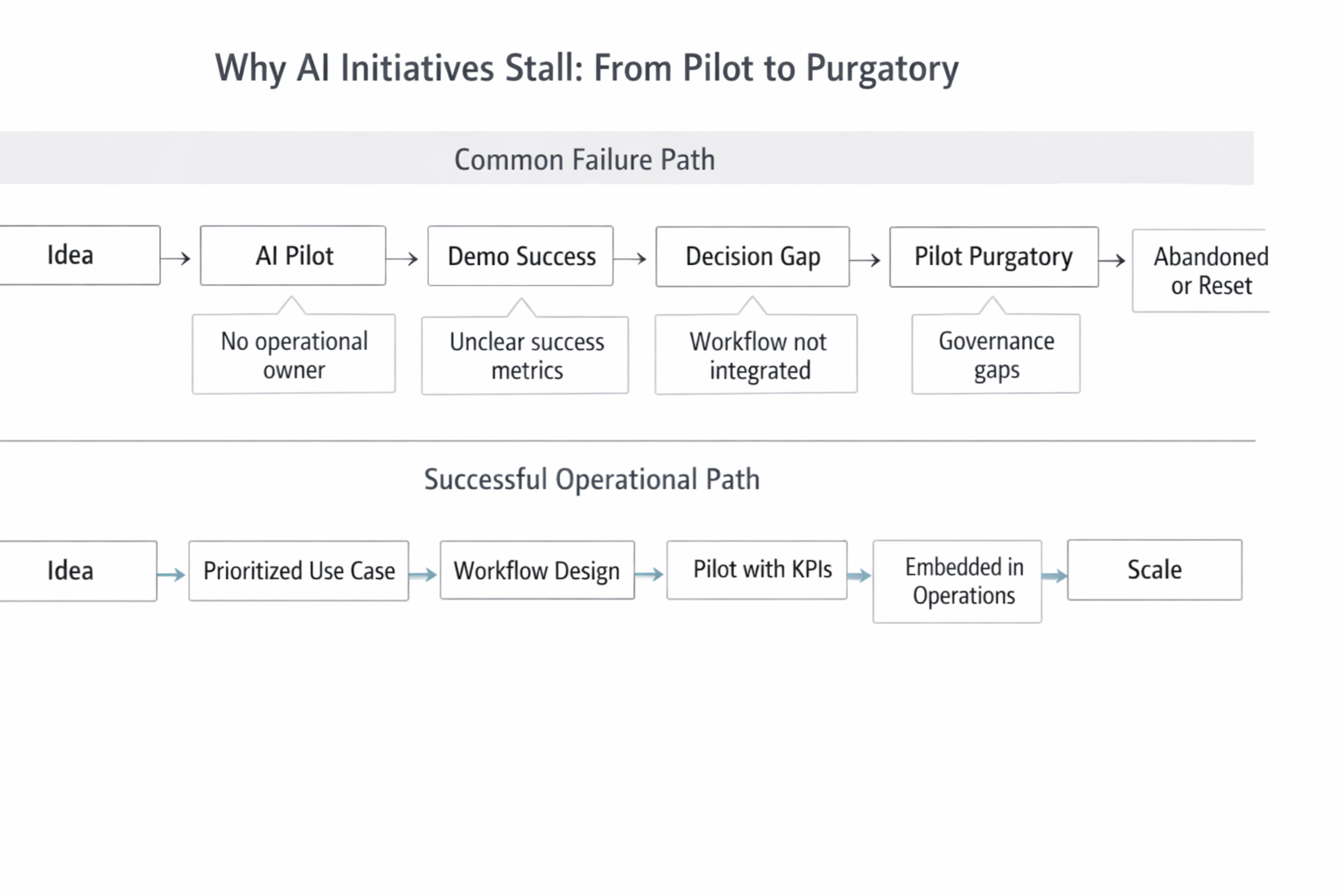

Many mid-market leaders recognize the pattern immediately. An AI idea is approved. A pilot is launched. The demo looks promising. Then momentum fades.

Budgets are redirected. Ownership becomes unclear. The pilot never scales, and the organization quietly moves on to the next initiative.

This pattern is widespread. An MIT Sloan Management Review analysis found that the vast majority of AI pilots never deliver measurable business value, largely due to organizational and operational gaps rather than model performance.

While more than 80% of mid-market firms report using generative AI tools, fewer than one in four have integrated AI meaningfully into core operations.

The result is what many executives now describe as pilot purgatory: AI exists in demos, side tools, or isolated departments, but not where real work happens.

Why AI Initiatives Stall: From Pilot to Purgatory

Definitions: What We Mean by “Operational AI” and Related Terms

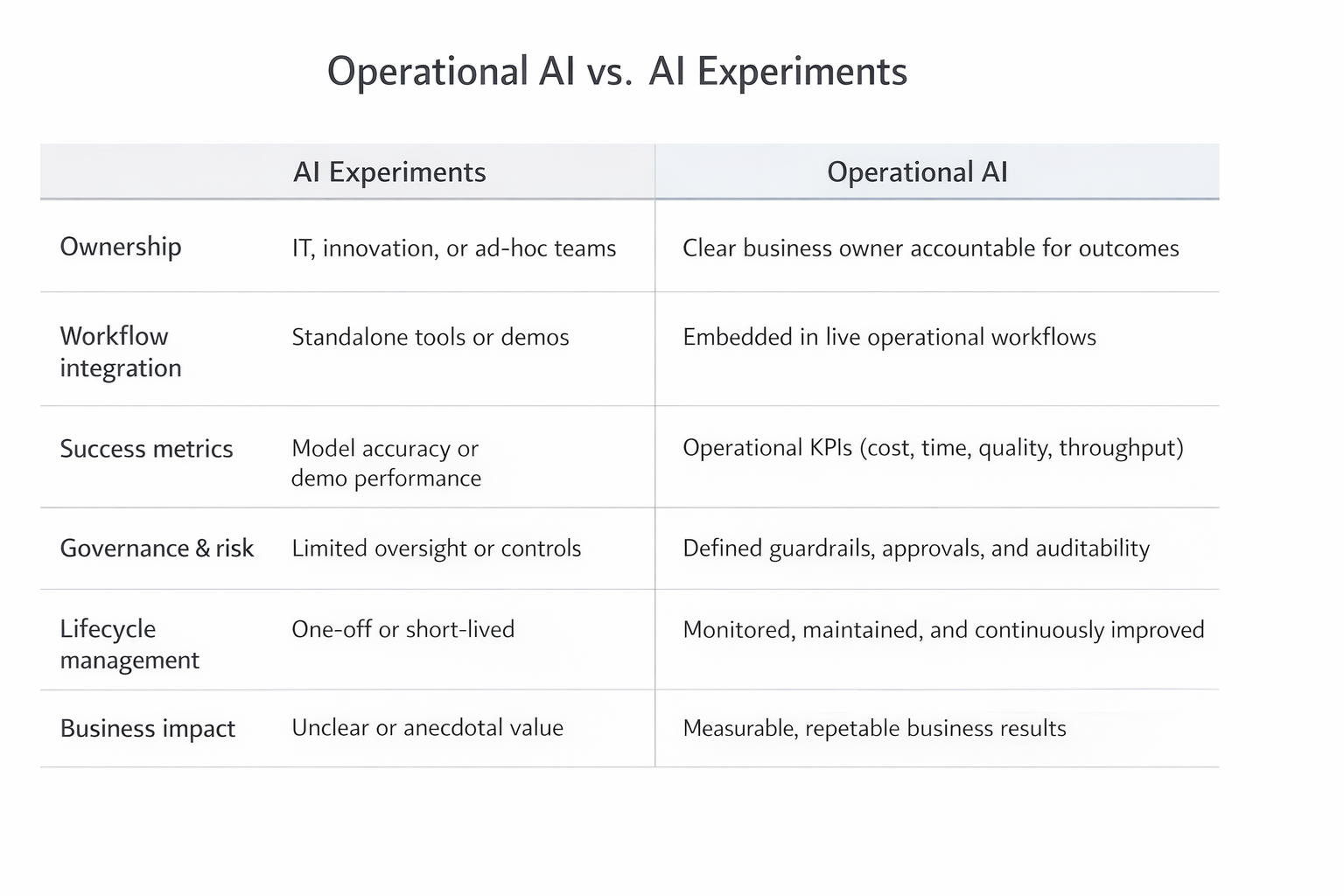

Before going further, it is important to align on language. Much confusion around AI stems from inconsistent terminology.

Operational AI — AI systems deployed into live business workflows, integrated with systems of record, governed, monitored, and measured for ongoing performance.

AI Opportunity — A specific, well-scoped business problem where AI can realistically improve speed, cost, accuracy, or decision quality.

AI Pilot — A controlled, time-bound implementation designed to validate feasibility, impact, and operational fit.

Production Deployment — The point at which an AI solution becomes part of day-to-day operations, with clear ownership, budget, monitoring, and support.

Feasibility — A combined assessment of data availability, workflow clarity, integration effort, governance requirements, and organizational readiness.

Orchestration Platform — The technical and operational layer that connects AI models, enterprise data, business rules, human approvals, and monitoring into a reliable system.

These distinctions matter. Many organizations believe they are “doing AI” when they are actually running disconnected experiments.
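To make the feasibility definition concrete, here is a minimal sketch of how the five dimensions could be recorded and summarized. The 1-to-5 scale, the floor of 3, and the names `FeasibilityAssessment` and `feasibility_summary` are illustrative assumptions, not part of any standard method.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class FeasibilityAssessment:
    """Hypothetical 1 (weak) to 5 (strong) scores, one per dimension in the definition."""
    data_availability: int
    workflow_clarity: int
    integration_effort: int        # assumed scale: 5 means low effort, so higher is better
    governance_requirements: int   # assumed scale: 5 means understood and manageable
    organizational_readiness: int


def feasibility_summary(assessment: FeasibilityAssessment, floor: int = 3) -> dict:
    """Return the average score plus any dimension that falls below the floor."""
    scores = {f.name: getattr(assessment, f.name) for f in fields(assessment)}
    for name, value in scores.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    blockers = [name for name, value in scores.items() if value < floor]
    return {
        "average": sum(scores.values()) / len(scores),
        "blockers": blockers,
        # A single weak dimension blocks the opportunity, even if the average looks fine.
        "proceed": not blockers,
    }


# Made-up scores for an invoice-extraction opportunity.
invoice_extraction = FeasibilityAssessment(
    data_availability=4,
    workflow_clarity=4,
    integration_effort=3,
    governance_requirements=2,
    organizational_readiness=4,
)
summary = feasibility_summary(invoice_extraction)
print(summary)
```

The design choice worth noting is that one weak dimension blocks the opportunity regardless of the average, which mirrors the idea that feasibility is a combined assessment rather than a single score.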

Why This Matters to Business and Operations Leaders

For COOs, CFOs, and functional leaders, stalled AI initiatives are not just an innovation issue. They are an operational and financial risk.

Efficiency and productivity

AI can drive meaningful productivity gains, but only when embedded into real workflows. McKinsey reports that organizations that successfully operationalize AI achieve 20–30% productivity improvements in targeted processes, while those stuck in pilot mode see little impact.

Cost and ROI

Failed pilots represent sunk cost without compounding return.

Customer experience

Disjointed AI creates fragmented experiences. A chatbot that operates outside core systems or staff workflows often frustrates customers rather than helping them. Operational AI improves consistency, response time, and service quality.

Risk and compliance

Ungoverned AI introduces real risk. Regulators increasingly expect traceability, auditability, and human oversight. Poorly governed AI can increase compliance exposure instead of reducing it.

Organizational credibility

Repeated AI failures erode trust. Teams become skeptical of future initiatives, and leadership loses confidence in digital transformation efforts.

How AI Applies in Practice (Without the Hype)

AI creates value when it is applied to the right types of problems.

In practice, AI works best when it handles high-volume, repeatable tasks, augments human decision-making, and operates within clear business rules. It struggles when asked to replace judgment, strategy, or poorly defined processes.

Common practical applications include:

- Document and data extraction (invoices, contracts, forms)

- Forecasting demand, risk, or workload patterns

- Anomaly detection in transactions or operations

- Workflow automation with human-in-the-loop controls

- Decision support that ranks or prioritizes options

RSM reports that nearly half of mid-market firms see the most value from AI in document processing, forecasting, and workflow automation—not fully autonomous systems.

The takeaway is simple: AI should fit how work actually happens, not how a vendor demo suggests it could happen.

Operational AI vs. AI Experiments

Realistic Examples from Non-Tech Industries

Logistics & Field Operations

Healthcare & Financial Services

Hospitality, Education & Manufacturing

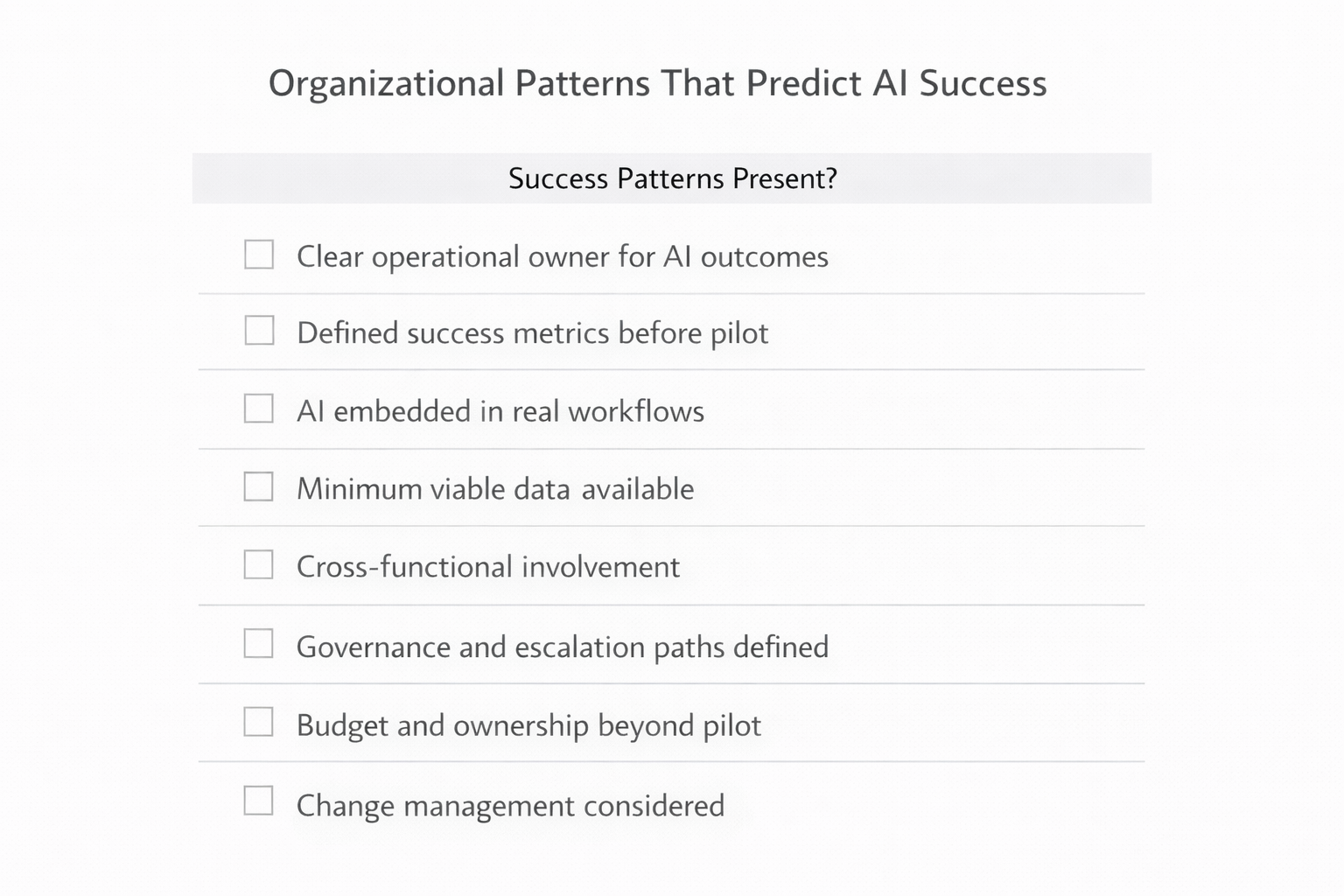

Organizational Patterns That Predict AI Success

Across industries, successful AI initiatives consistently show the same patterns:

- Clear operational ownership, not just IT sponsorship

- Defined success metrics before the pilot begins

- Minimum viable data readiness, not perfect data

- Early governance and escalation paths

- Cross-functional involvement from day one

- Willingness to redesign workflows, not just automate steps

These patterns are usually visible before any model is built.
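One of these patterns, defining success metrics before the pilot begins, can be captured in a few lines. The sketch below is an assumption-laden illustration: the `Metric` class, the `evaluate_pilot` helper, and every number in it are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """A success criterion agreed before the pilot starts (all values hypothetical)."""
    name: str
    baseline: float              # current performance, recorded for context
    target: float                # level the pilot must reach to count as a success
    higher_is_better: bool = True

    def met(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


def evaluate_pilot(metrics: list[Metric], observed: dict[str, float]) -> dict[str, bool]:
    """Map each metric name to pass/fail; a metric with no measurement counts as not met."""
    return {m.name: (m.name in observed and m.met(observed[m.name])) for m in metrics}


# Criteria written down before the pilot begins.
criteria = [
    Metric("minutes_per_invoice", baseline=12.0, target=6.0, higher_is_better=False),
    Metric("extraction_accuracy", baseline=0.90, target=0.97),
]

# Measurements collected during the pilot (made-up values).
results = evaluate_pilot(criteria, {"minutes_per_invoice": 5.5, "extraction_accuracy": 0.95})
print(results)
```

Because the targets are fixed up front, the pilot review becomes a comparison against agreed numbers rather than a debate about whether the demo looked promising.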

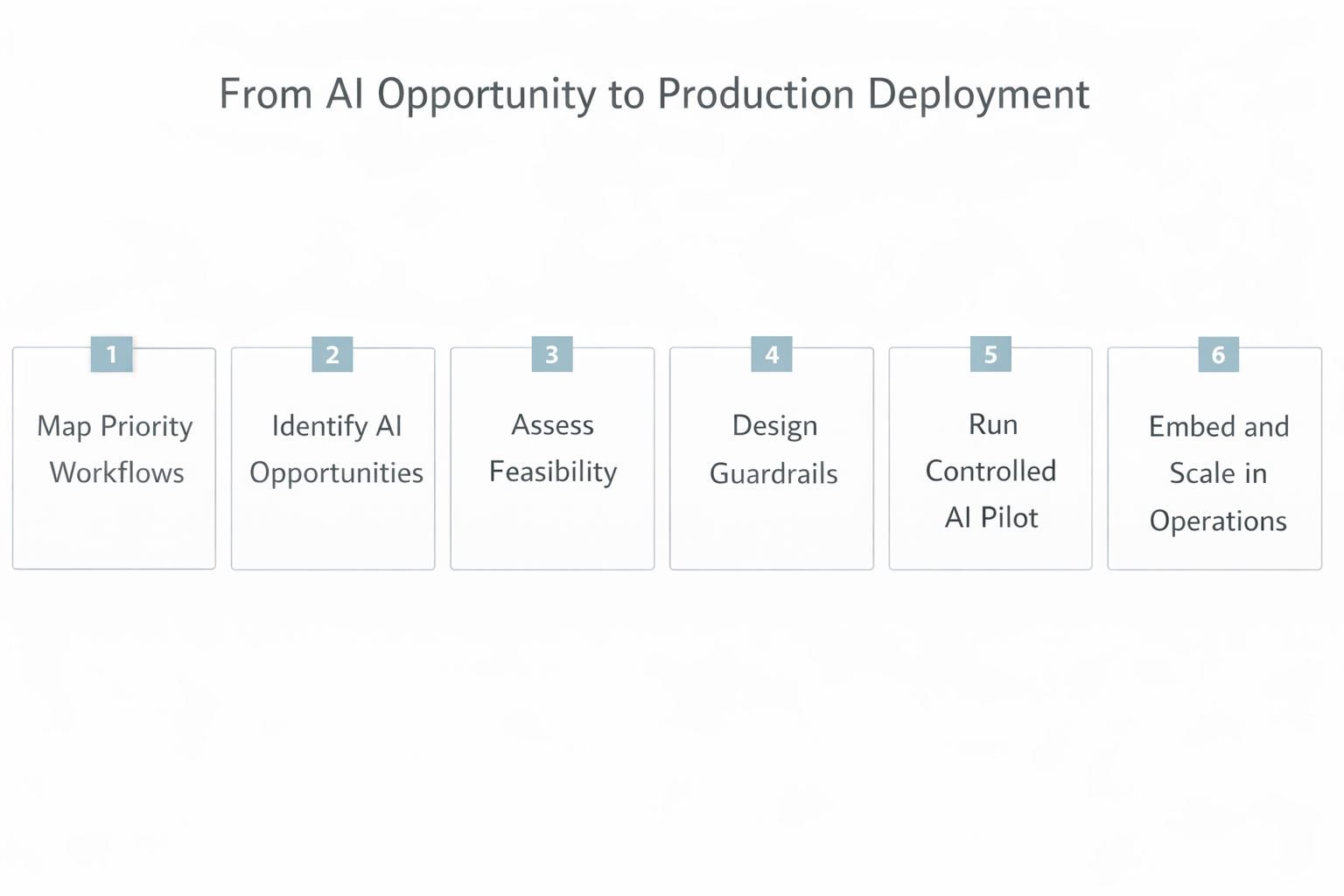

Recommended Implementation Steps for Leaders

From AI Opportunity to Production Deployment

Leaders do not need a complex transformation roadmap to get started. A practical, repeatable sequence works best:

1. Map priority workflows. Identify operational pain points where time, errors, or costs concentrate.

2. Identify realistic AI opportunities. Tie opportunities directly to those workflows and business outcomes.

3. Assess feasibility. Evaluate data availability, workflow clarity, and governance requirements.

4. Design guardrails. Define success metrics, escalation paths, and human oversight thresholds.

5. Run a controlled AI pilot. Test with production intent, not just technical accuracy.

6. Integrate and scale gradually. Connect to systems of record and expand based on proven results.

Organizations that follow this sequence are far more likely to reach production deployment within 90–120 days.
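The guardrail step, with its escalation paths and human oversight thresholds, is often the hardest to picture. The sketch below shows one way such thresholds might be expressed. The threshold values and the names `Guardrails`, `Route`, and `route` are assumptions for illustration, and real limits would come from the business owner and the governance team.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Guardrails:
    """Hypothetical oversight thresholds; actual values are a business decision."""
    min_confidence: float = 0.90      # below this, a person reviews the AI output
    escalate_below: float = 0.50      # below this, the case goes to the escalation path
    max_auto_amount: float = 5_000.0  # above this, a person always reviews


def route(confidence: float, amount: float, guardrails: Guardrails = Guardrails()) -> Route:
    """Decide who handles a case based on model confidence and transaction size."""
    if confidence < guardrails.escalate_below:
        return Route.ESCALATE
    if confidence < guardrails.min_confidence or amount > guardrails.max_auto_amount:
        return Route.HUMAN_REVIEW
    return Route.AUTO_APPROVE


print(route(confidence=0.97, amount=1_200))   # routine case, handled automatically
print(route(confidence=0.97, amount=12_000))  # large amount, sent for human review
print(route(confidence=0.40, amount=100))     # very low confidence, escalated
```

Keeping the thresholds in one small, named structure makes them easy to review, audit, and tighten or loosen as the pilot produces evidence.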

Common Pitfalls and How to Avoid Them

Treating AI as a tool purchase — Avoid it by starting with a business problem, not a vendor.

Running pilots without a production path — Avoid it by designing pilots with integration, ownership, and budget in mind.

Ignoring data and workflow reality — Avoid it by validating data availability and process clarity early.

Underestimating change management — Avoid it by involving users, explaining intent, and designing escalation paths.

Over-automating too early — Avoid it by using AI to assist before it decides autonomously.

When This Approach May Not Be Appropriate

AI is not always the right starting point. This approach may not be appropriate when:

- Processes are undefined or constantly changing

- Data is sparse, unreliable, or inaccessible

- Leadership alignment is weak or fragmented

In these cases, process improvement, data cleanup, or organizational alignment should come first. AI applied too early often amplifies dysfunction rather than fixing it.

Executive FAQ

How long should an AI pilot take?

Most effective pilots run 6–10 weeks and are designed to test operational fit, not perfection.

Do we need perfect data before starting?

No. You need sufficient data to test feasibility and value. Waiting for perfect data often delays progress indefinitely.

Who should own AI initiatives?

Ownership should sit with the business function responsible for outcomes, with IT and data teams as partners.

Key Takeaways for Business Leaders

- AI success is driven by organizational patterns, not technology choices

- Most AI failures occur during the transition from pilot to operations

- Operational AI requires ownership, workflow integration, and governance

- Feasibility matters as much as technical capability

- Starting with the right problems improves ROI more than starting with tools

Ready to Assess Your AI Readiness?

Sentia Digital helps mid-market organizations assess their AI readiness and identify high-value opportunities, reducing risk before any significant investment is made.
