Operational AI vs Experiments: What Executives Should Really Aim For

Most AI initiatives stall because leaders treat them as isolated projects rather than integrated systems that operate inside the business.

Artificial intelligence is now on the agenda of nearly every mid-market leadership team. Yet many organizations struggle to convert that attention into measurable results. The reason is rarely the technology itself. It is how AI is framed, governed, and deployed.

Too often, companies run AI experiments that look promising in a demo but never change how work actually gets done. What creates durable value instead is Operational AI—AI that is embedded into workflows, owned by the business, and measured against real outcomes.

This article explains the difference and what executives should aim for.

1. What experiments are

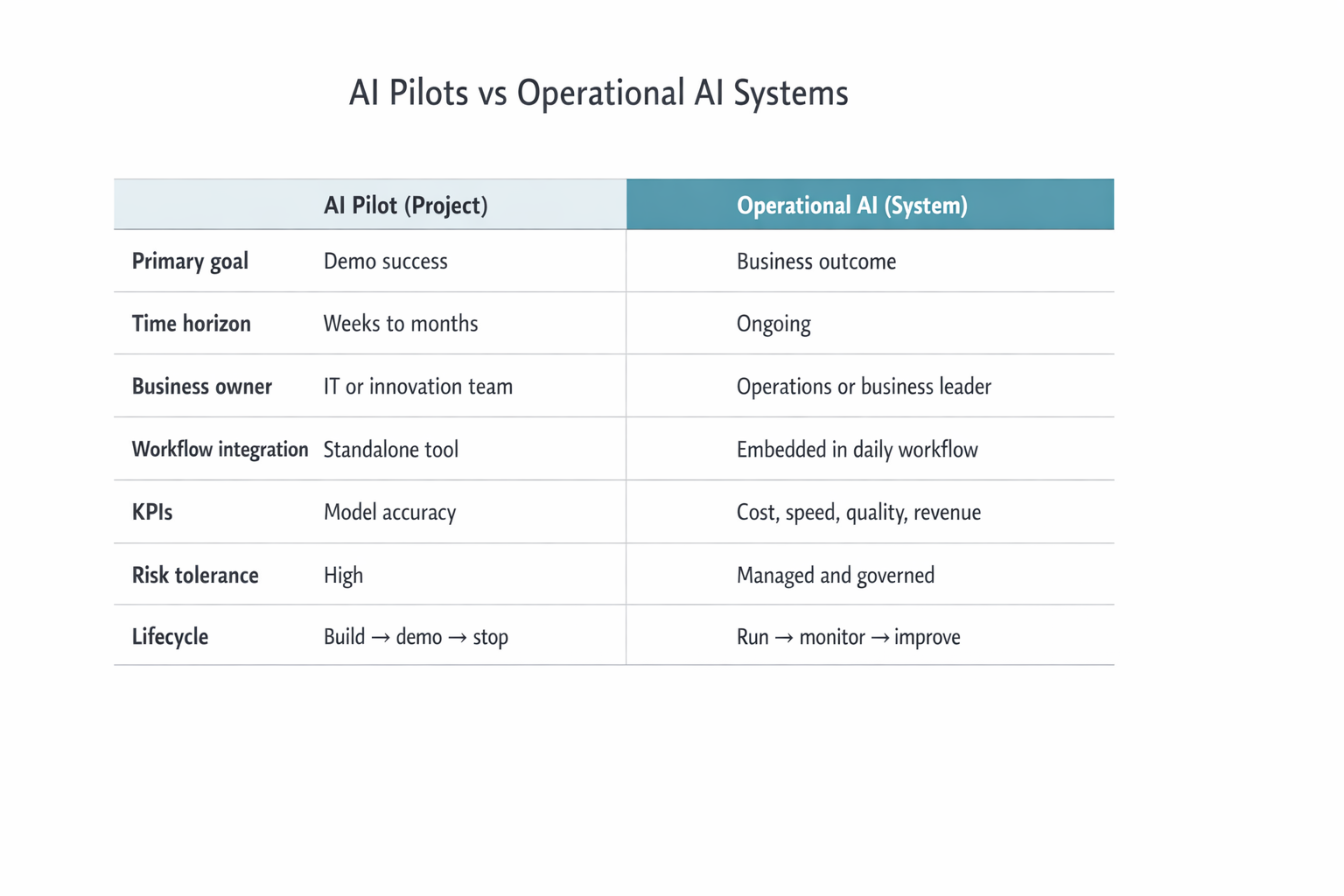

AI Pilots vs Operational AI Systems

Most organizations begin with AI pilots. These are short-term, limited-scope efforts designed to answer a narrow question: Can this model do something useful?

They are often run by IT, data science, or innovation teams and focus on proving that a piece of technology works. Typical pilot outputs include a working chatbot, a predictive model, a classification or recommendation engine, or a proof-of-concept dashboard.

Pilots are valuable for learning. But they are not designed to change how the business operates. They usually run outside core workflows, have no clear business owner, are judged by technical success rather than business impact, and end when the demo is finished.

As a result, even strong pilots often go nowhere.

2. What Operational AI is

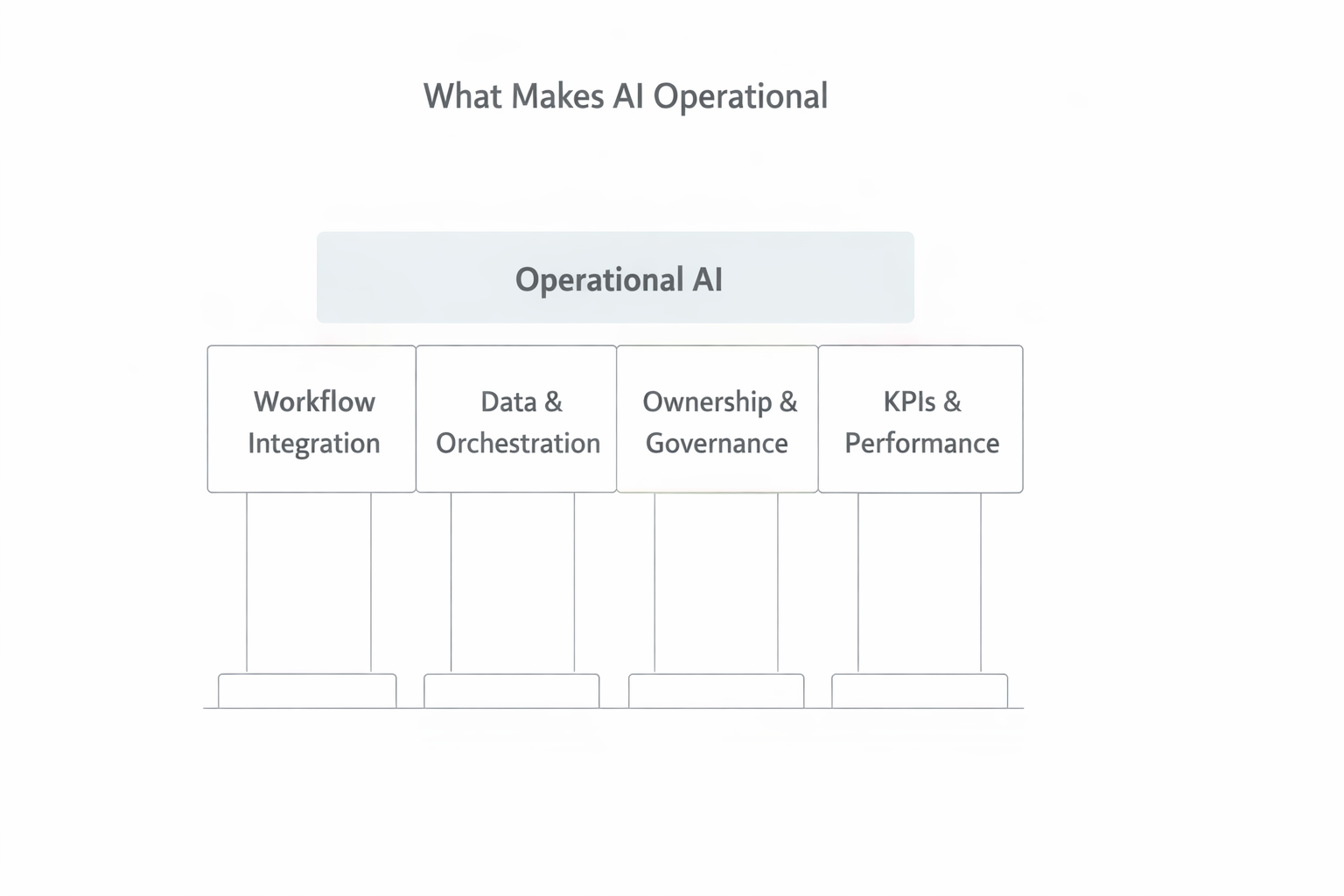

What Makes AI Operational

Operational AI is fundamentally different. It is not just a model or tool. It is a business system that uses AI to support real decisions and actions, every day, inside live workflows.

In practical terms, Operational AI has four defining characteristics:

1. Workflow integration

AI outputs appear inside the tools and processes people already use. They do not live in a separate app or dashboard.

2. Data and orchestration

Data flows reliably into the system. An orchestration platform moves information between systems, runs the models, and delivers outputs at the right moment.

3. Ownership and governance

A business leader owns the outcomes. Clear rules exist for risk, bias, security, and compliance.

4. KPIs and monitoring

Performance is tracked using business metrics like cycle time, cost, error rates, and customer satisfaction—not just model accuracy.

When all four are present, AI becomes part of how the business runs, not something bolted on.

3. Key differences: integration, governance, ownership

This is where most initiatives break down.

AI pilots are built around project logic: finish the build, show the demo, declare success.

Operational AI is built around system logic: embed in workflows, assign accountability, measure outcomes, manage risk, improve continuously.

The same model can produce radically different results depending on which logic is used.

Without integration, people ignore it. Without governance, leaders do not trust it. Without ownership, no one pushes it into production.

That is why pilots that look impressive often fail to deliver.

4. Why pilots fail

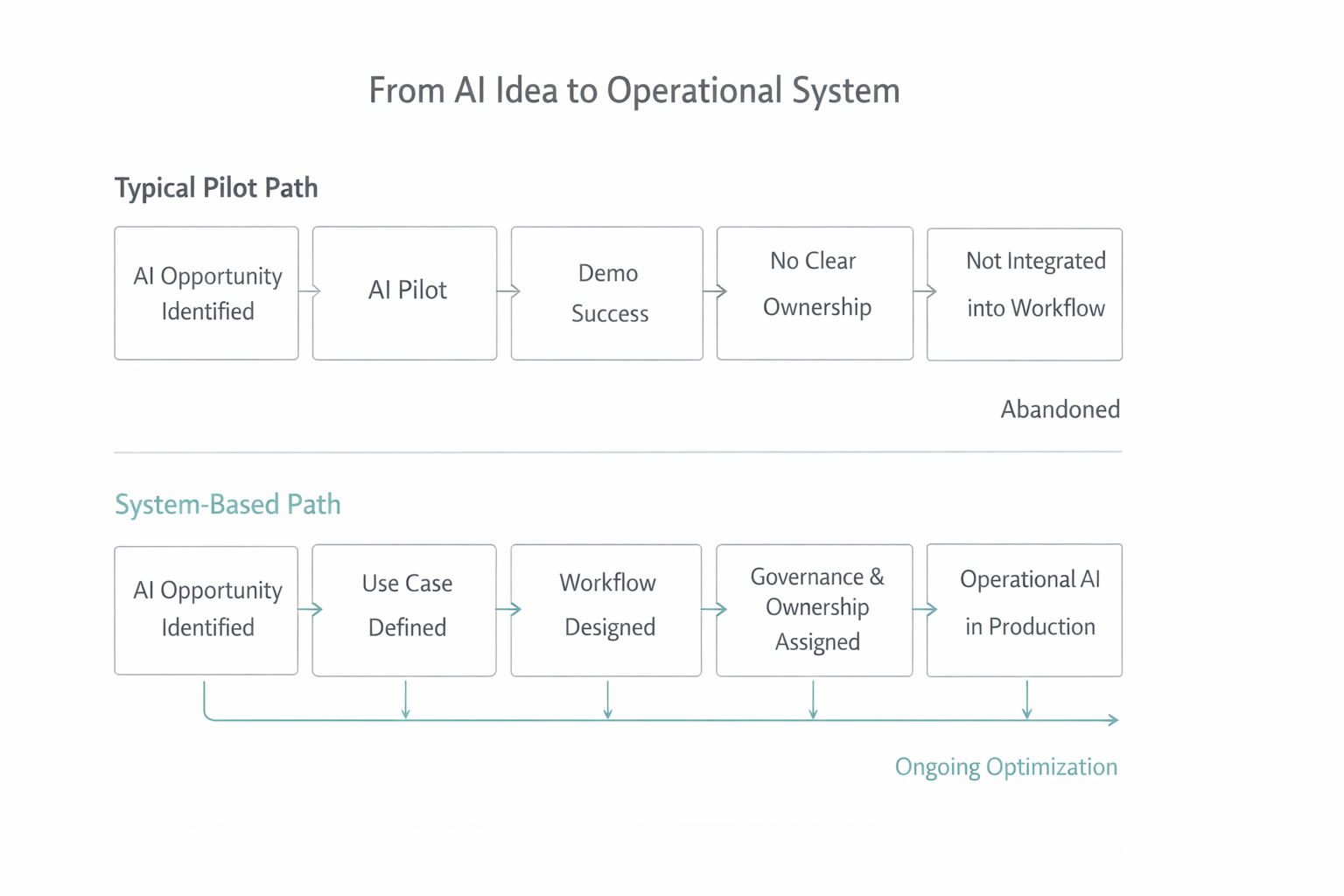

From AI Idea to Operational System

The reasons are rarely technical. The most common breakdowns are:

No business outcome defined

Teams build something, but no one agrees what success means.

No workflow design

The AI produces output, but no one knows where or how it should be used.

No governance

Risk, bias, and compliance are left unaddressed.

No ownership

There is no executive accountable for adoption and results.

These gaps trap organizations in what many call "pilot purgatory." The technology works. The business never changes.

5. Example comparison

Consider a mid-market logistics company experimenting with AI-driven route planning.

✕ The Pilot

A data science team builds a model that suggests more efficient delivery routes. In tests, it outperforms manual planning.

But dispatchers do not use it. The tool lives in a separate dashboard. It does not integrate with their scheduling system. No one owns the decision to change how routes are assigned.

The pilot is quietly abandoned.

✓ The System

The same company revisits the use case. This time:

- Route recommendations appear inside the dispatch tool

- A head of operations owns on-time delivery and fuel cost metrics

- Performance is tracked weekly

- Dispatchers can give feedback when routes fail

The AI becomes part of the operation. Dispatcher feedback improves the model. Results compound.

The technology did not change. The system did.

6. Where AI can realistically help

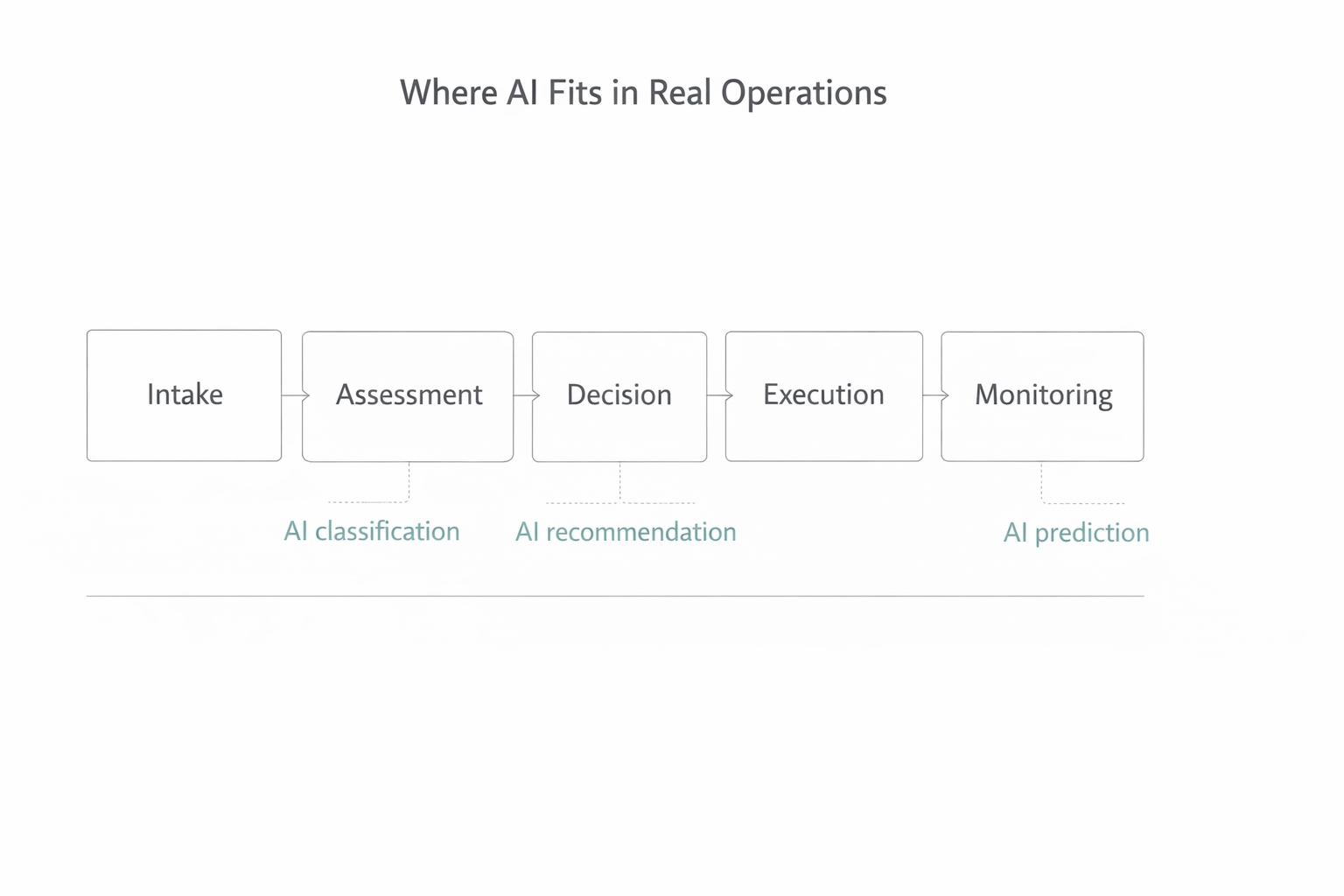

Where AI Fits in Real Operations

AI delivers the most value when it supports existing workflows, not when it tries to replace them. The strongest use cases fall into three categories:

1. Triage and classification

AI sorts and prioritizes work—support tickets, insurance claims, loan applications, maintenance requests. This ensures people spend time on the right things first.

2. Recommendation and decision support

AI provides guidance at the moment a decision is made: which customer to contact, which invoice to review, which machine to service, which lead to pursue. Humans still decide. AI simply improves speed and consistency.

3. Forecasting and planning

AI predicts what is likely to happen: demand, staffing needs, equipment failures, customer churn. These insights help managers act earlier and with better information.

None of this is hype. It is operational support.

7. Typical workflows impacted

Across operations, finance, HR, and customer service, AI fits into a common pattern:

- Intake — Data arrives

- Assessment — AI analyzes and scores

- Decision — A person reviews AI output

- Execution — Work is completed

- Monitoring — Results feed back into the system
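For readers who want to see this loop concretely, here is a minimal sketch of the five steps under stated assumptions. It is an illustration, not a production design. `WorkItem`, `Monitor`, `run_workflow`, and `keyword_score` are hypothetical names, and the keyword check is a toy stand-in for a real model. The sketch shows the AI scoring and ordering incoming work, a person making the final call on each item, and every outcome being recorded so the business can track how often people override the AI.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class WorkItem:
    """One unit of work, e.g. a support ticket (hypothetical structure)."""
    item_id: str
    text: str
    ai_score: Optional[float] = None       # set during Assessment
    human_approved: Optional[bool] = None  # set during Decision
    done: bool = False                     # set during Execution


@dataclass
class Monitor:
    """Collects outcomes so results can feed back into the system."""
    records: List[dict] = field(default_factory=list)

    def record(self, item: WorkItem) -> None:
        self.records.append({"id": item.item_id, "score": item.ai_score,
                             "approved": item.human_approved, "done": item.done})

    def override_rate(self) -> float:
        """Share of AI recommendations that a person rejected."""
        if not self.records:
            return 0.0
        rejected = sum(1 for r in self.records if r["approved"] is False)
        return rejected / len(self.records)


def run_workflow(intake: List[WorkItem],
                 assess: Callable[[WorkItem], float],
                 review: Callable[[WorkItem], bool],
                 monitor: Monitor) -> List[WorkItem]:
    # Intake: items arrive as a list. Assessment: the AI scores each one.
    for item in intake:
        item.ai_score = assess(item)
    # Triage: highest-scoring items go to the reviewer first.
    queue = sorted(intake, key=lambda i: i.ai_score, reverse=True)
    for item in queue:
        item.human_approved = review(item)  # Decision: a person accepts or rejects the AI output
        item.done = True                    # Execution: the work is completed either way
        monitor.record(item)                # Monitoring: the outcome is logged for feedback
    return queue


def keyword_score(item: WorkItem) -> float:
    """Toy stand-in for a model: flags outages as urgent (assumption)."""
    return 1.0 if "outage" in item.text.lower() else 0.2


tickets = [WorkItem("T1", "Password reset"), WorkItem("T2", "Site outage in EU")]
monitor = Monitor()
# Hypothetical reviewer who disagrees with the AI's low priority for T1.
ordered = run_workflow(tickets, keyword_score, lambda i: i.item_id != "T1", monitor)
print([i.item_id for i in ordered])  # ['T2', 'T1']
print(monitor.override_rate())       # 0.5
```

The override rate is only one example of a feedback signal. A rate that climbs over time suggests that either the model or the way it is embedded in the workflow needs attention.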

If AI is not embedded in these steps, it becomes extra work. When it is embedded, it becomes leverage.

8. How executives should guide teams

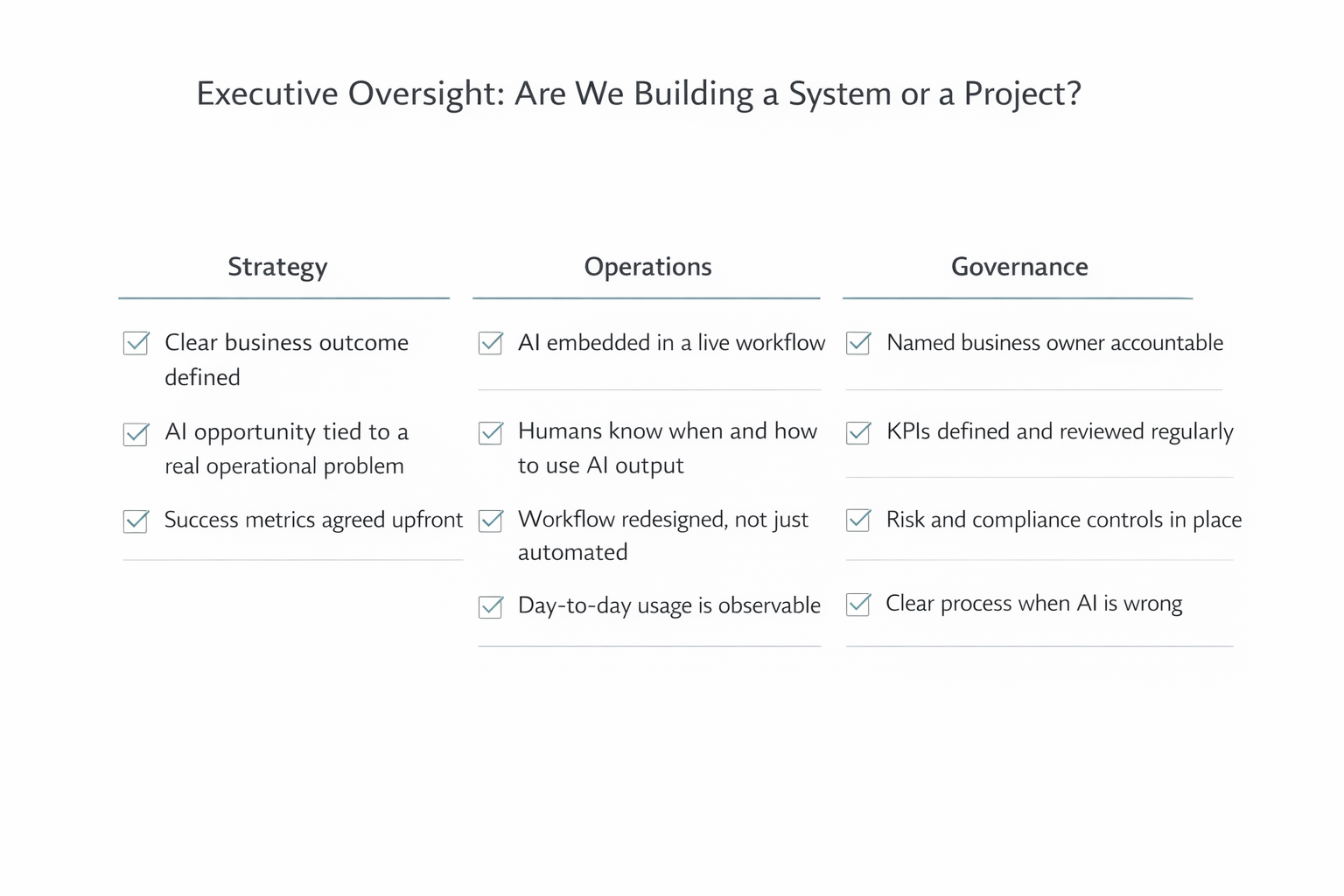

Executive Oversight – Are We Building a System or a Project?

Executives do not need to manage models. They need to manage systems. The most effective leaders ask simple, disciplined questions:

Strategy

What business outcome are we targeting? How will we measure success?

Operations

Where does the AI show up in the workflow? Who uses it, and when?

Governance

Who owns risk and compliance? What happens when the AI is wrong?

Ownership

Which executive is accountable for results?

These questions prevent pilots from drifting into irrelevance.

Executive FAQ

Is it still worth running AI pilots?

Yes. Pilots reduce technical risk. But they must be designed with a clear path to production.

How long before we see results?

Operational AI creates value over time. Expect steady improvement, not instant transformation.

What if we lack AI expertise?

Most companies succeed by using platforms and partners that handle orchestration and monitoring, not by building everything themselves.

Key Takeaways for Business Leaders

- AI creates value only when it becomes part of how the business operates

- Most pilots fail because they are not integrated, governed, or owned

- Operational AI supports real workflows, not abstract experiments

- Executive oversight determines whether AI scales or stalls

- Start with business outcomes, then design the system around them

Ready to Move from Pilots to Operational AI?

Sentia Digital helps mid-market leaders build AI systems that deliver measurable results.
