Why AI Initiatives Stall in Operations and How to Design for Scale

Pilot purgatory is a design failure, not a technology failure. Most AI initiatives fail not because the technology is immature, but because they are never designed to operate inside real business workflows.

Executive Summary

Mid-market organizations are launching AI pilots faster than ever, yet a large share never become production systems that meaningfully change how work gets done.

Research from Gartner and RAND shows that many AI initiatives stall or are abandoned after proof of concept—not because models fail, but because of weak data readiness, unclear ownership, poor integration, and deferred governance.

This article explains why pilots stall, why that failure matters operationally and financially, and how leaders can design AI pilots that are production-ready from the start.

What Do We Mean by “Production-Ready”?

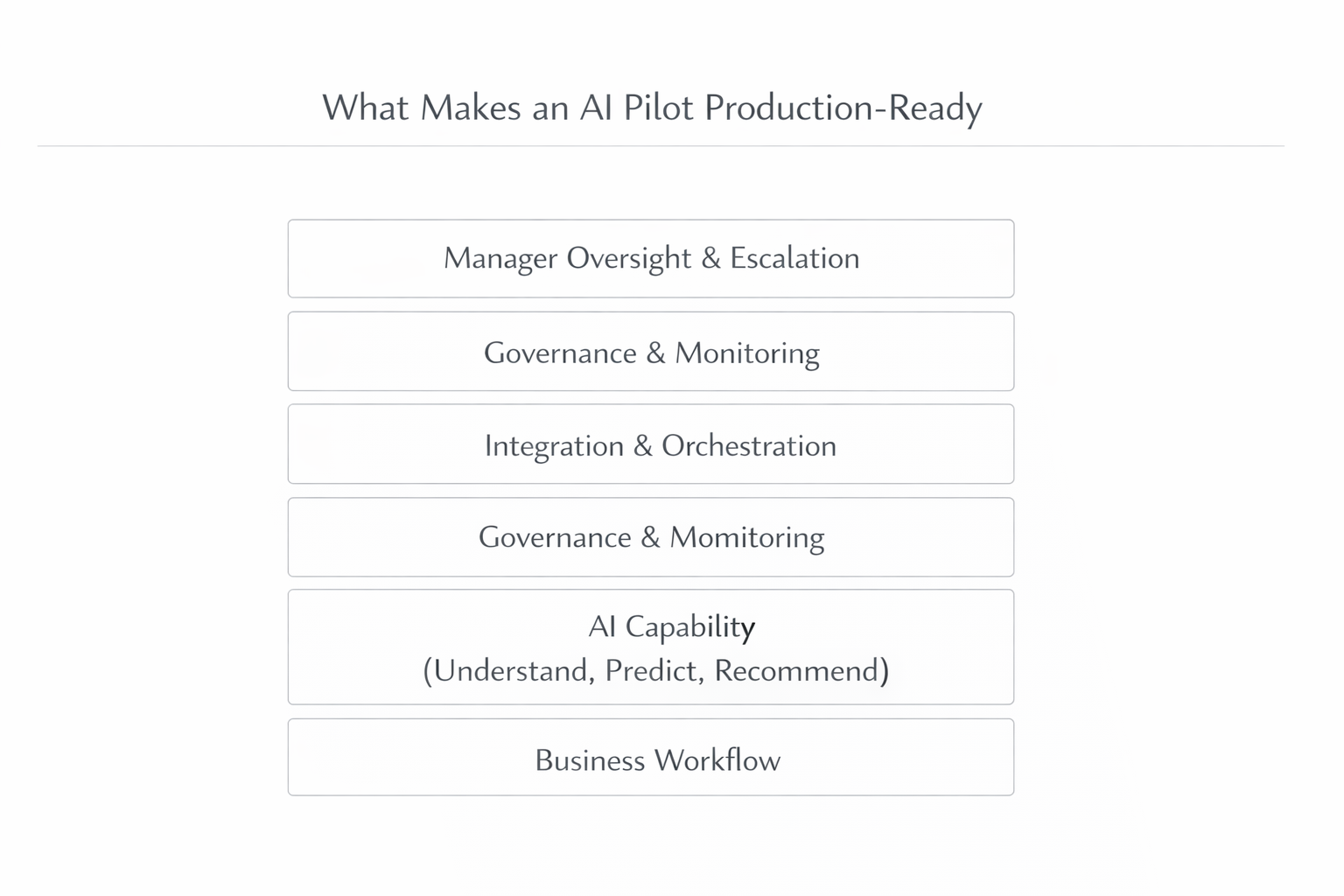

What Makes an AI Pilot Production-Ready

Before diagnosing why AI pilots fail, it is important to clarify terminology. Many stalled initiatives suffer from misaligned expectations rather than poor technology.

- Operational AI — AI embedded directly into day-to-day workflows, where its outputs drive real actions. It includes ownership, system integration, and monitoring.

- AI opportunity — A clearly defined operational problem where AI can improve speed, cost, quality, or risk outcomes.

- AI pilot — A time-boxed implementation intended to test an AI opportunity under real operational conditions, including real users, data quality issues, and exceptions.

- Production deployment — The AI capability is running inside live workflows, integrated with systems of record, governed, monitored, and owned by the business.

- Feasibility — Goes beyond technical possibility. It includes data readiness, workflow fit, integration effort, risk controls, and the organization’s ability to adopt change.

- Orchestration — The connective layer that routes AI outputs into workflows, manages approvals, handles exceptions, logs decisions, and supports monitoring.

These definitions set the foundation for understanding why pilot purgatory is fundamentally a design issue.
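To make the orchestration definition concrete, here is a minimal sketch of a routing layer. It assumes the model reports a confidence score and that low-confidence outputs go to a person. The names `ModelOutput`, `Orchestrator`, and `REVIEW_THRESHOLD`, and the 0.85 cutoff, are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical cutoff: below this confidence, a person reviews the output.
REVIEW_THRESHOLD = 0.85


@dataclass
class ModelOutput:
    case_id: str
    label: str         # the queue the model suggests
    confidence: float  # model-reported score in [0, 1]


@dataclass
class Orchestrator:
    decision_log: list = field(default_factory=list)
    review_queue: list = field(default_factory=list)

    def route(self, output: ModelOutput) -> str:
        """Send confident outputs into the workflow and escalate the rest."""
        if output.confidence >= REVIEW_THRESHOLD:
            action = f"auto_route:{output.label}"
        else:
            action = "human_review"
            self.review_queue.append(output.case_id)
        # Every decision is logged, whether automated or escalated.
        self.decision_log.append((output.case_id, action))
        return action


orchestrator = Orchestrator()
print(orchestrator.route(ModelOutput("C-1", "billing", 0.93)))  # auto_route:billing
print(orchestrator.route(ModelOutput("C-2", "billing", 0.41)))  # human_review
```

In a real deployment the auto-route branch would call the system of record rather than return a string, and the threshold would be set by the operational owner, not the model team.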

What Is the Real Operational Problem Behind Pilot Purgatory?

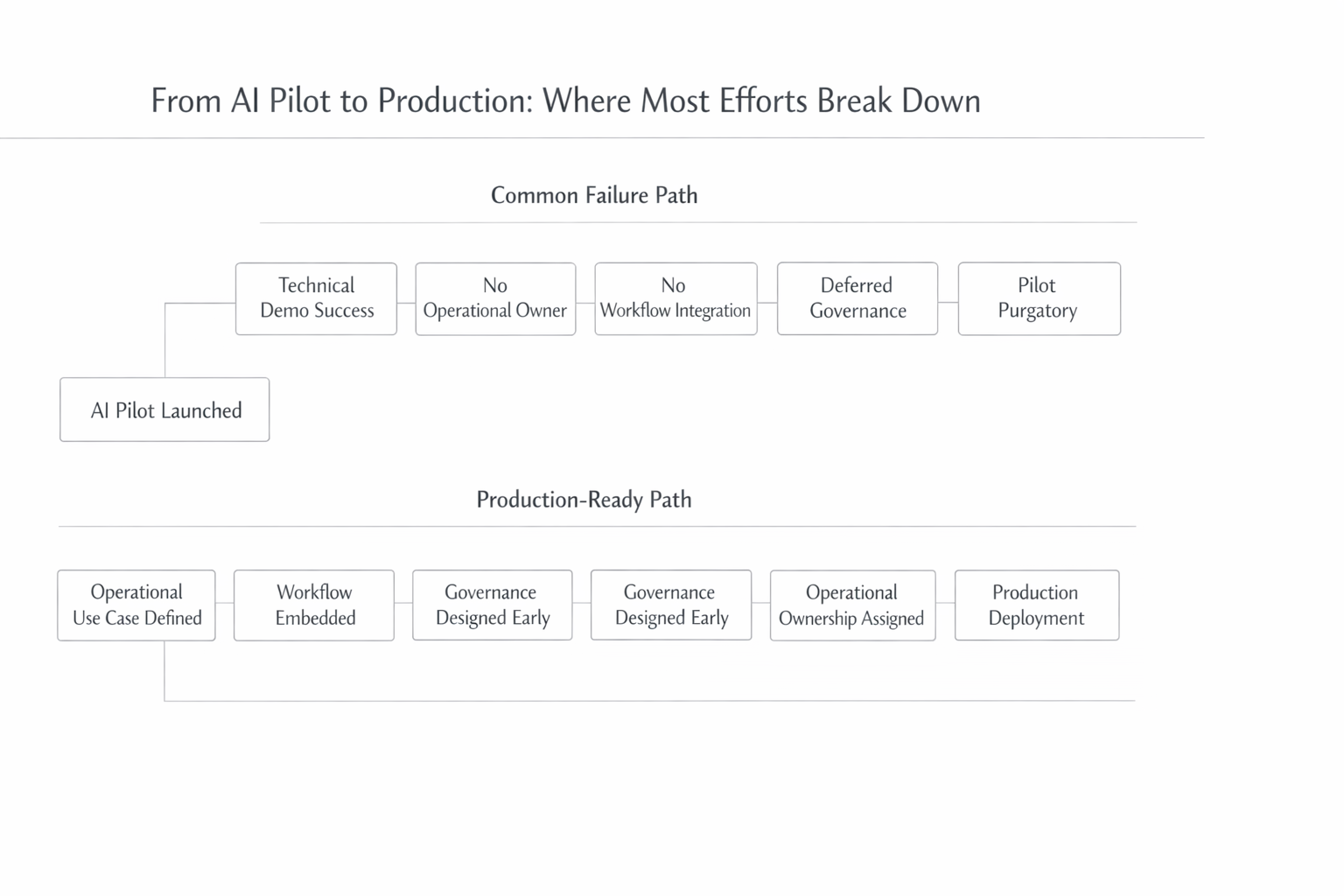

From AI Pilot to Production—Where Most Efforts Break Down

Most mid-market companies can demonstrate AI capability in a controlled setting. The failure occurs when pilots meet the complexity of real operations.

A common sequence looks like this: A pilot is launched by IT, analytics, or an innovation group. The model performs well in a demo. Leaders are optimistic. Then the pilot reaches the point where it must integrate into daily workflows. At that stage, ownership becomes unclear, integration work expands, and exceptions appear. Without clear accountability and design discipline, momentum fades. The pilot remains technically “alive” but operationally irrelevant.

Common root causes in mid-market companies

Several design failures appear repeatedly:

- Pilots built as demos, not workflows. Success is defined by accuracy or impressive outputs, not by reduced cycle time or lower cost per transaction.

- No operational owner. The pilot belongs to IT or analytics, but no business leader is accountable for outcomes.

- Integration deferred. Outputs live in separate tools instead of core systems such as ERP, CRM, or ticketing platforms.

- Governance postponed. Monitoring, logging, and risk controls are treated as future concerns.

- Change management underfunded. Training and role clarity are limited, leading teams to revert to old habits.

These issues are most visible in high-volume, exception-heavy workflows such as case triage, document processing, service operations, dispatch, and compliance reviews.

Why Does This Matter to Executives?

AI Pilot vs. Operational AI—A Practical Comparison

Pilot purgatory has tangible consequences for operations, finance, and risk.

Operational impact

When pilots stall, manual work continues unchanged. Employees bypass AI tools that add steps instead of removing them. Exceptions overwhelm narrow pilot designs. Over time, workflows become more fragmented rather than more efficient.

Financial impact

The financial cost is often underestimated. AI pilots consume software spend, integration effort, and internal labor. When they fail to scale, that investment produces little return. Deloitte reports that nearly half of executives say their AI initiatives deliver less value than expected, which erodes confidence in future funding. For mid-market firms, even one stalled pilot can represent hundreds of thousands of dollars in direct and opportunity costs.

Risk and compliance impact

Pilots that operate without governance create hidden exposure. Decisions lack audit trails, data handling is inconsistent, and accountability is unclear. Gartner highlights inadequate risk controls as a key reason AI projects are abandoned after proof of concept, especially in regulated environments.
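An audit trail does not have to be elaborate to be useful. The sketch below shows one possible shape for a decision record, written as one JSON line per decision. The field names and the `write_audit_record` helper are assumptions for illustration, not a standard, and an in-memory stream stands in for whatever append-only store an organization actually uses.

```python
import io
import json
from datetime import datetime, timezone


def write_audit_record(stream, *, case_id, model_version, decision, decided_by, input_ref):
    """Append one decision as a JSON line so it can be reviewed later."""
    record = {
        "case_id": case_id,
        "model_version": model_version,  # which model produced the output
        "decision": decision,            # what was decided
        "decided_by": decided_by,        # "model" or a reviewer's ID
        "input_ref": input_ref,          # pointer to the source data, not a copy
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }
    stream.write(json.dumps(record) + "\n")
    return record


# An in-memory stream stands in for an append-only file or log table.
audit_log = io.StringIO()
write_audit_record(audit_log, case_id="C-1", model_version="v0.3",
                   decision="approve", decided_by="model", input_ref="doc/123")
write_audit_record(audit_log, case_id="C-2", model_version="v0.3",
                   decision="deny", decided_by="reviewer-17", input_ref="doc/124")

records = [json.loads(line) for line in audit_log.getvalue().splitlines()]
print(len(records), records[1]["decided_by"])  # 2 reviewer-17
```

Recording who or what made each decision, and against which model version, is what makes accountability traceable after the fact.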

How Does AI Actually Apply in Practice?

AI creates value only when it is embedded inside workflows that already matter.

Where AI delivers practical value

- Classification and routing to prioritize cases and assign them to the right queue

- Extraction and normalization to convert documents, emails, and forms into structured data

- Summarization to support faster and more consistent review

- Recommendation to suggest next steps or resolutions

- Monitoring to detect anomalies, drift, or policy violations

What AI does not replace

AI does not replace workflow design, accountability, governance, or adoption. Most production-ready systems rely on human review and escalation for exceptions.

Production-ready AI integrates outputs into workflows, triggers actions in systems of record, logs decisions, and measures outcomes using operational KPIs rather than model metrics alone.
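As a rough illustration of measuring operational KPIs rather than model metrics, the sketch below summarizes completed transactions before and during a pilot. The `Transaction` fields and the sample figures are invented for the example; real numbers would come from the system of record.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Transaction:
    hours_to_complete: float  # cycle time for one case
    labor_cost: float         # cost to process it, in dollars
    had_error: bool           # whether rework was needed


def operational_kpis(transactions):
    """Summarize a batch of completed transactions as workflow-level KPIs."""
    return {
        "avg_cycle_time_hours": mean(t.hours_to_complete for t in transactions),
        "cost_per_transaction": mean(t.labor_cost for t in transactions),
        "error_rate": sum(t.had_error for t in transactions) / len(transactions),
    }


# Invented sample data for illustration only.
baseline = [Transaction(10.0, 40.0, True), Transaction(14.0, 60.0, False)]
pilot = [Transaction(6.0, 30.0, False), Transaction(8.0, 30.0, False)]

before, after = operational_kpis(baseline), operational_kpis(pilot)
for name in before:
    print(name, before[name], "->", after[name])
```

Note that nothing here refers to model accuracy. The comparison is between how the workflow performed without the AI capability and how it performs with it.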

What Does This Look Like in the Real World?

Healthcare administration

Healthcare organizations often pilot AI to extract data from prior authorization or denial letters. The model works, but staff still re-enter data manually because outputs are not integrated into revenue cycle workflows. By contrast, production deployments embed document processing end to end, improving turnaround time and consistency at scale.

Field service operations

In field service, pilots frequently recommend optimal schedules or routes. Dispatchers view suggestions in a dashboard but continue using legacy processes. BCG reports that when AI recommendations are embedded directly into dispatch workflows, organizations can achieve 10 to 15 percent productivity gains. The difference lies in workflow integration, not model sophistication.

Logistics and manufacturing

In logistics, AI pilots often generate predictive alerts about delays or shortages. Production-ready systems embed those alerts into exception handling and planning workflows, triggering actions rather than emails. Industry analysis highlights that high-exception environments quickly expose weak pilot design.

How Should Leaders Design AI Pilots That Actually Scale?

Designing an AI Pilot That Can Scale

Organizations that escape pilot purgatory follow a disciplined sequence:

1. Start with the workflow. Identify where work slows down, breaks, or costs too much.

2. Define operational success metrics. Focus on cycle time, cost per transaction, error rates, or service levels.

3. Design review and escalation. Plan how exceptions are handled from the start.

4. Integrate with systems of record. Avoid parallel tools that undermine adoption.

5. Establish governance early. Build monitoring and logging into the pilot.

6. Decide explicitly. Scale, iterate, or stop. No indefinite pilots.

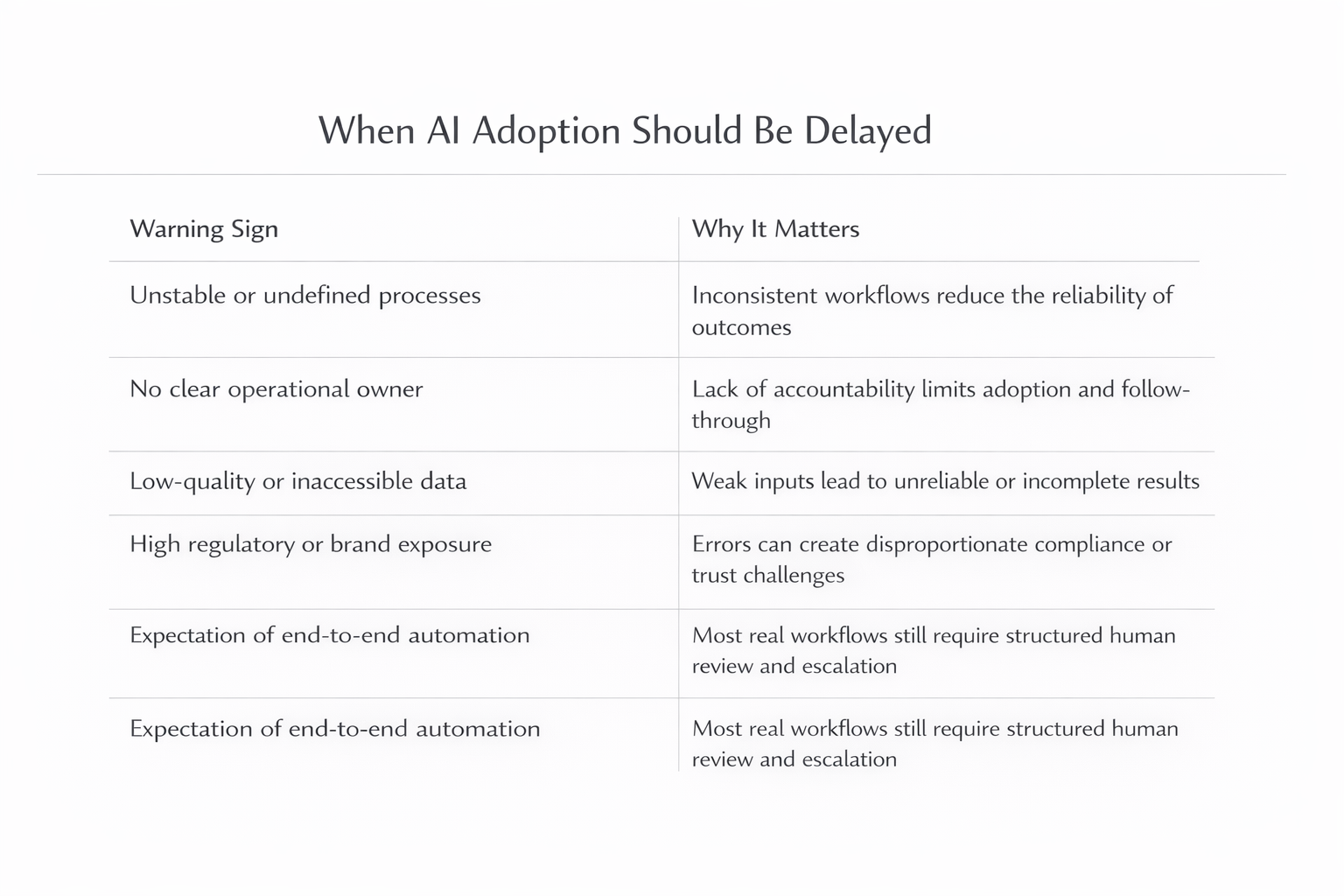
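The final step of the sequence above, an explicit scale, iterate, or stop decision, can be written down as a simple rule agreed before the pilot starts. The sketch below is one hypothetical rule, not a recommended policy: scale when every KPI target is met, iterate when some are met, and stop when none are. The function name `pilot_decision` and its parameters are assumptions.

```python
def pilot_decision(kpi_results, min_targets_to_scale=None):
    """Return 'scale', 'iterate', or 'stop' from KPI target results.

    kpi_results maps a KPI name to True (target met) or False (missed).
    By default, every target must be met to scale.
    """
    if not kpi_results:
        raise ValueError("at least one KPI target is required")
    met = sum(kpi_results.values())
    needed = len(kpi_results) if min_targets_to_scale is None else min_targets_to_scale
    if met >= needed:
        return "scale"
    if met > 0:
        return "iterate"
    return "stop"


print(pilot_decision({"cycle_time": True, "cost_per_transaction": True}))    # scale
print(pilot_decision({"cycle_time": True, "cost_per_transaction": False}))   # iterate
print(pilot_decision({"cycle_time": False, "cost_per_transaction": False}))  # stop
```

The value of a rule like this lies less in the code than in forcing the targets and the decision criteria to be agreed up front, so the pilot cannot drift on indefinitely.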

When Is This Approach Not Appropriate?

There are situations where delaying AI is the right decision:

- Processes are unstable or poorly defined

- No clear operational owner exists

- Data quality is low or inaccessible

- Regulatory or brand exposure is high and controls are immature

- Leaders expect end-to-end automation without human review

In these cases, process improvement or data cleanup often delivers more value than rushing into AI.

Common Pitfalls and How to Avoid Them

Common pitfalls include measuring success by model accuracy, deferring integration and governance, treating adoption as optional, and underestimating change management.

Avoid these by tying pilots to business KPIs, embedding AI into default workflows, assigning clear ownership, and monitoring performance continuously.

Key Takeaways for Business Leaders

- Most AI pilots fail due to design and operating model gaps, not technology

- Operational AI succeeds when embedded into real workflows with clear ownership

- Measuring business impact matters more than model performance

- Integration, governance, and adoption must be designed from the start

- Treat pilots as early production systems, not experiments

Executive FAQ

Why do pilots that work in demos fail in production?

Because demos test models in isolation, while production requires integration, ownership, governance, and adoption.

How long should an AI pilot last?

Long enough to validate real operational impact—typically weeks or a few months, not indefinitely.

Do we need advanced AI to get value?

No. Many high-ROI use cases rely on simple models combined with strong workflow design.

Ready to Design AI Pilots That Scale?

Sentia Digital helps organizations identify the right AI opportunities and design pilots that are ready for production from day one.
